kwimage package

Subpackages

Submodules

Module contents

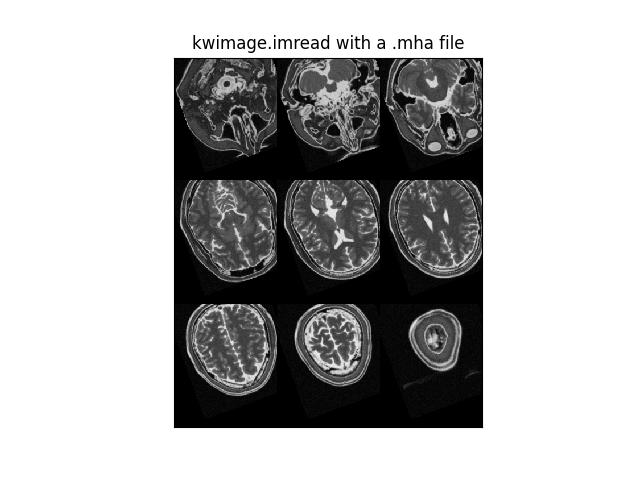

The Kitware Image Module (kwimage) contains functions that accomplish lower-level image operations via a high-level API.

- class kwimage.Affine(matrix)

Bases: Projective

A thin wrapper around a 3x3 matrix that represents an affine transform.

Implements methods for:

- creating random affine transforms
- decomposing the matrix
- finding a best-fit transform between corresponding points

TODO: - [ ] fully rational transform

Example

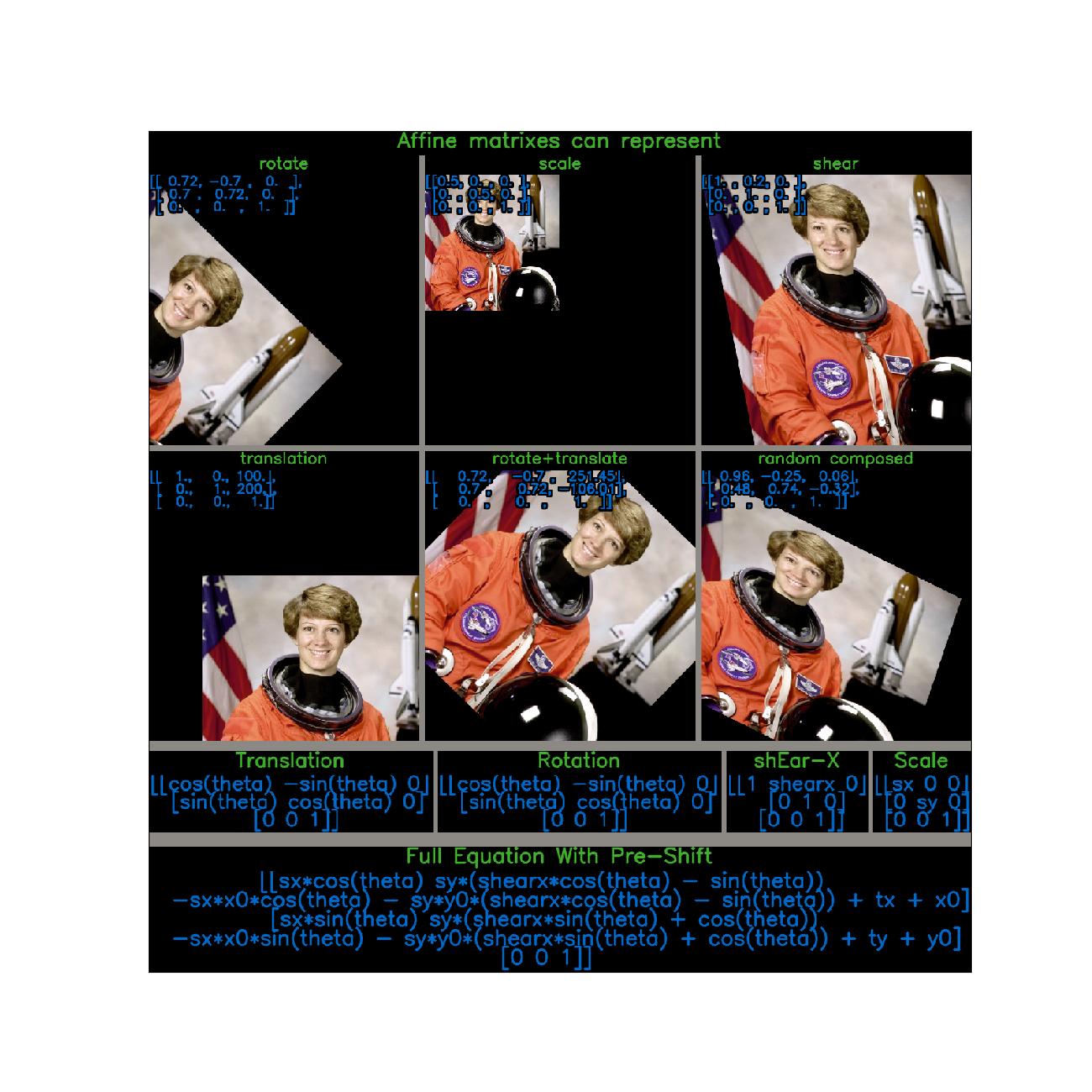

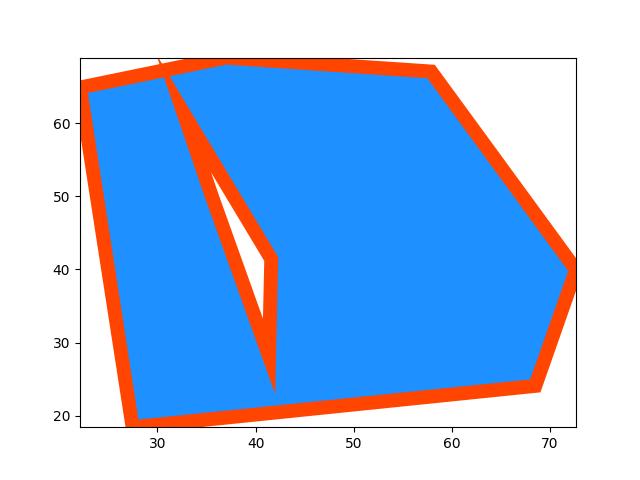

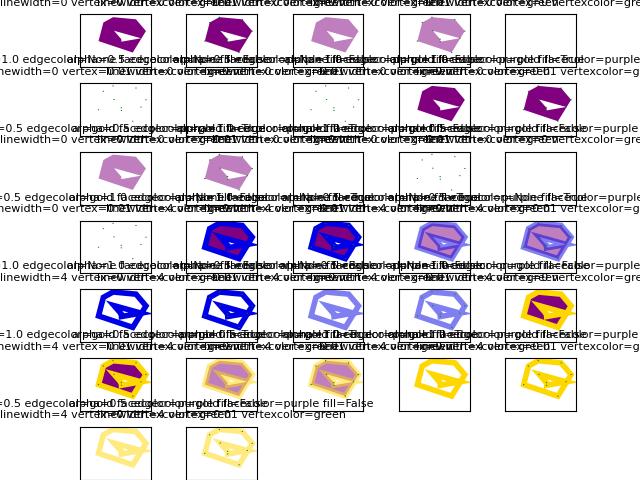

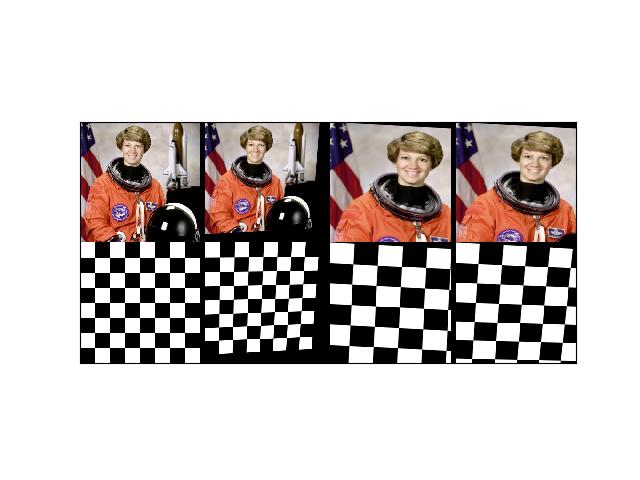

    >>> import math
    >>> import numpy as np
    >>> import ubelt as ub
    >>> import kwimage
    >>> image = kwimage.grab_test_image()
    >>> theta = 0.123 * math.tau
    >>> components = {
    >>>     'rotate': kwimage.Affine.affine(theta=theta),
    >>>     'scale': kwimage.Affine.affine(scale=0.5),
    >>>     'shear': kwimage.Affine.affine(shearx=0.2),
    >>>     'translation': kwimage.Affine.affine(offset=(100, 200)),
    >>>     'rotate+translate': kwimage.Affine.affine(theta=0.123 * math.tau, about=(256, 256)),
    >>>     'random composed': kwimage.Affine.random(scale=(0.5, 1.5), translate=(-20, 20), theta=(-theta, theta), shearx=(0, .4), rng=900558176210808600),
    >>> }
    >>> warp_stack = []
    >>> for key, aff in components.items():
    ...     warp = kwimage.warp_affine(image, aff)
    ...     warp = kwimage.draw_text_on_image(
    ...         warp,
    ...         ub.repr2(aff.matrix, nl=1, nobr=1, precision=2, si=1, sv=1, with_dtype=0),
    ...         org=(1, 1),
    ...         valign='top', halign='left',
    ...         fontScale=0.8, color='kw_blue',
    ...         border={'thickness': 3},
    ...     )
    ...     warp = kwimage.draw_header_text(warp, key, color='kw_green')
    ...     warp_stack.append(warp)
    >>> warp_canvas = kwimage.stack_images_grid(warp_stack, chunksize=3, pad=10, bg_value='kitware_gray')
    >>> # xdoctest: +REQUIRES(module:sympy)
    >>> import sympy
    >>> # Shows the symbolic construction of the code
    >>> # https://groups.google.com/forum/#!topic/sympy/k1HnZK_bNNA
    >>> from sympy.abc import theta
    >>> params = x0, y0, sx, sy, theta, shearx, tx, ty = sympy.symbols(
    >>>     'x0, y0, sx, sy, theta, shearx, tx, ty')
    >>> theta = sympy.symbols('theta')
    >>> # move the center to 0, 0
    >>> tr1_ = np.array([[1, 0, -x0],
    >>>                  [0, 1, -y0],
    >>>                  [0, 0,   1]])
    >>> # Define core components of the affine transform
    >>> S = np.array([  # scale
    >>>     [sx,  0, 0],
    >>>     [ 0, sy, 0],
    >>>     [ 0,  0, 1]])
    >>> E = np.array([  # x-shear
    >>>     [1, shearx, 0],
    >>>     [0,      1, 0],
    >>>     [0,      0, 1]])
    >>> R = np.array([  # rotation
    >>>     [sympy.cos(theta), -sympy.sin(theta), 0],
    >>>     [sympy.sin(theta),  sympy.cos(theta), 0],
    >>>     [               0,                 0, 1]])
    >>> T = np.array([  # translation
    >>>     [1, 0, tx],
    >>>     [0, 1, ty],
    >>>     [0, 0,  1]])
    >>> # Construct the affine 3x3 about the origin
    >>> aff0 = np.array(sympy.simplify(T @ R @ E @ S))
    >>> # move 0, 0 back to the specified origin
    >>> tr2_ = np.array([[1, 0, x0],
    >>>                  [0, 1, y0],
    >>>                  [0, 0,  1]])
    >>> # combine transformations
    >>> aff = tr2_ @ aff0 @ tr1_
    >>> print('aff = {}'.format(ub.repr2(aff.tolist(), nl=1)))
    >>> # This could be prettier
    >>> texts = {
    >>>     'Translation': sympy.pretty(T),
    >>>     'Rotation': sympy.pretty(R),
    >>>     'shEar-X': sympy.pretty(E),
    >>>     'Scale': sympy.pretty(S),
    >>> }
    >>> print(ub.repr2(texts, nl=2, sv=1))
    >>> equation_stack = []
    >>> for text, m in texts.items():
    >>>     render_canvas = kwimage.draw_text_on_image(None, m, color='kw_blue', fontScale=1.0)
    >>>     render_canvas = kwimage.draw_header_text(render_canvas, text, color='kw_green')
    >>>     render_canvas = kwimage.imresize(render_canvas, scale=1.3)
    >>>     equation_stack.append(render_canvas)
    >>> equation_canvas = kwimage.stack_images(equation_stack, pad=10, axis=1, bg_value='kitware_gray')
    >>> render_canvas = kwimage.draw_text_on_image(None, sympy.pretty(aff), color='kw_blue', fontScale=1.0)
    >>> render_canvas = kwimage.draw_header_text(render_canvas, 'Full Equation With Pre-Shift', color='kw_green')
    >>> # xdoctest: -REQUIRES(module:sympy)
    >>> # xdoctest: +REQUIRES(--show)
    >>> import kwplot
    >>> plt = kwplot.autoplt()
    >>> canvas = kwimage.stack_images([warp_canvas, equation_canvas, render_canvas], pad=20, axis=0, bg_value='kitware_gray', resize='larger')
    >>> canvas = kwimage.draw_header_text(canvas, 'Affine matrices can represent', color='kw_green')
    >>> kwplot.imshow(canvas)
    >>> fig = plt.gcf()
    >>> fig.set_size_inches(13, 13)

Example

    >>> import numpy as np
    >>> import kwimage
    >>> self = kwimage.Affine(np.eye(3))
    >>> m1 = np.eye(3) @ self
    >>> m2 = self @ np.eye(3)

Example

    >>> import numpy as np
    >>> import ubelt as ub
    >>> from kwimage.transform import *  # NOQA
    >>> m = {}
    >>> # Works, and returns an Affine
    >>> m[len(m)] = x = Affine.random() @ np.eye(3)
    >>> assert isinstance(x, Affine)
    >>> m[len(m)] = x = Affine.random() @ None
    >>> assert isinstance(x, Affine)
    >>> # Works, and returns an ndarray
    >>> m[len(m)] = x = np.eye(3) @ Affine.random()
    >>> assert isinstance(x, np.ndarray)
    >>> # Works, and returns a Matrix
    >>> m[len(m)] = x = Affine.random() @ Matrix.random(3)
    >>> assert isinstance(x, Matrix)
    >>> m[len(m)] = x = Matrix.random(3) @ Affine.random()
    >>> assert isinstance(x, Matrix)
    >>> print('m = {}'.format(ub.repr2(m)))

- property shape

- concise()

Return a concise, coercible dictionary representation of this matrix.

- Returns

a small serializable dictionary of concise parameters that can be passed to Affine.coerce() to reconstruct this object.

- Return type

Dict

Example

    >>> import numpy as np
    >>> import ubelt as ub
    >>> import kwimage
    >>> self = kwimage.Affine.random(rng=0, scale=1)
    >>> params = self.concise()
    >>> assert np.allclose(kwimage.Affine.coerce(params).matrix, self.matrix)
    >>> print('params = {}'.format(ub.repr2(params, nl=1, precision=2)))
    params = {
        'offset': (0.08, 0.38),
        'theta': 0.08,
        'type': 'affine',
    }

Example

    >>> import numpy as np
    >>> import ubelt as ub
    >>> import kwimage
    >>> self = kwimage.Affine.random(rng=0, scale=2, offset=0)
    >>> params = self.concise()
    >>> assert np.allclose(kwimage.Affine.coerce(params).matrix, self.matrix)
    >>> print('params = {}'.format(ub.repr2(params, nl=1, precision=2)))
    params = {
        'scale': 2.00,
        'theta': 0.04,
        'type': 'affine',
    }

- classmethod coerce(data=None, **kwargs)

Attempt to coerce the data into an Affine object.

- Parameters

data – some data we attempt to coerce to an Affine matrix

**kwargs – some data we attempt to coerce to an Affine matrix, mutually exclusive with data.

- Returns

Affine

Example

    >>> import numpy as np
    >>> import skimage.transform
    >>> import kwimage
    >>> kwimage.Affine.coerce({'type': 'affine', 'matrix': [[1, 0, 0], [0, 1, 0]]})
    >>> kwimage.Affine.coerce({'scale': 2})
    >>> kwimage.Affine.coerce({'offset': 3})
    >>> kwimage.Affine.coerce(np.eye(3))
    >>> kwimage.Affine.coerce(None)
    >>> kwimage.Affine.coerce(skimage.transform.AffineTransform(scale=30))

- eccentricity()

Eccentricity of the ellipse formed by this affine matrix.

- Returns

large when there are big scale differences in principal directions or skews.

- Return type

float

References

- https://en.wikipedia.org/wiki/Conic_section
- https://github.com/rasterio/affine/blob/78c20a0cfbb5ec/affine/__init__.py#L368

Example

    >>> import kwimage
    >>> kwimage.Affine.random(rng=432).eccentricity()
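One way to see what this measures: the linear (upper-left 2x2) part of the matrix maps the unit circle to an ellipse whose semi-axes are its singular values s_max ≥ s_min, and the standard ellipse eccentricity is sqrt(1 - (s_min / s_max)^2). The pure-Python sketch below computes that quantity. We assume, but have not confirmed, that it agrees with kwimage's `eccentricity()` and the rasterio reference above; `ellipse_eccentricity` is a hypothetical helper, not part of kwimage.

```python
import math


def ellipse_eccentricity(matrix):
    """Eccentricity of the ellipse that a 3x3 affine matrix maps the unit circle to.

    Hypothetical helper (not kwimage API). Only the upper-left 2x2 block is
    used; translation does not change the shape of the ellipse.
    """
    a, b = matrix[0][0], matrix[0][1]
    c, d = matrix[1][0], matrix[1][1]
    # Entries of the symmetric matrix M^T M; its eigenvalues are the
    # squared singular values of the linear part M.
    p = a * a + c * c
    q = b * b + d * d
    r = a * b + c * d
    # Half the gap between the two eigenvalues (numerically stable form)
    half_gap = math.hypot((p - q) / 2, r)
    lam_max = (p + q) / 2 + half_gap
    if lam_max == 0:
        raise ValueError('the linear part of the matrix is zero')
    # e^2 = 1 - lam_min / lam_max = (lam_max - lam_min) / lam_max
    return math.sqrt(2 * half_gap / lam_max)


theta = 0.3
rotation = [[math.cos(theta), -math.sin(theta), 5.0],
            [math.sin(theta), math.cos(theta), 7.0],
            [0.0, 0.0, 1.0]]
stretch = [[2.0, 0.0, 0.0],
           [0.0, 1.0, 0.0],
           [0.0, 0.0, 1.0]]
print(ellipse_eccentricity(rotation))  # 0.0: a rotation keeps the circle a circle
print(ellipse_eccentricity(stretch))   # sqrt(3) / 2, about 0.866
```

Working from M^T M rather than from the trace/determinant formula avoids cancellation when the two singular values are nearly equal, which is exactly the regime where the eccentricity is close to 0.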

- to_shapely()

Returns the parameters of this transform as the 6-tuple expected by shapely.affinity.affine_transform, i.e. (a, b, d, e, xoff, yoff).

- Returns

Tuple[float, float, float, float, float, float]
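shapely documents `affine_transform` as taking a flat 6-tuple [a, b, d, e, xoff, yoff] and applying x' = a*x + b*y + xoff, y' = d*x + e*y + yoff. The pure-Python sketch below shows how the first two rows of a 3x3 matrix map onto that order and checks that both forms move a point identically. It illustrates shapely's documented convention; it is not kwimage's code, and `to_shapely_params`, `apply_shapely_params` and `apply_matrix` are hypothetical names.

```python
def to_shapely_params(matrix):
    """Flatten a 3x3 affine matrix into shapely's 2D parameter order.

    Hypothetical helper. shapely.affinity.affine_transform expects
    [a, b, d, e, xoff, yoff] where
        x' = a * x + b * y + xoff
        y' = d * x + e * y + yoff
    """
    (a, b, xoff), (d, e, yoff), _ = matrix
    return (a, b, d, e, xoff, yoff)


def apply_shapely_params(params, x, y):
    """Apply the 6-tuple exactly as shapely documents it (pure Python)."""
    a, b, d, e, xoff, yoff = params
    return (a * x + b * y + xoff, d * x + e * y + yoff)


def apply_matrix(matrix, x, y):
    """Apply a 3x3 homogeneous matrix to the point (x, y)."""
    xh = matrix[0][0] * x + matrix[0][1] * y + matrix[0][2]
    yh = matrix[1][0] * x + matrix[1][1] * y + matrix[1][2]
    w = matrix[2][0] * x + matrix[2][1] * y + matrix[2][2]
    return (xh / w, yh / w)


M = [[1.5, 0.2, 10.0],
     [-0.3, 0.8, -4.0],
     [0.0, 0.0, 1.0]]
params = to_shapely_params(M)
print(params)  # (1.5, 0.2, -0.3, 0.8, 10.0, -4.0)
print(apply_shapely_params(params, 2.0, 3.0))
print(apply_matrix(M, 2.0, 3.0))
```

The translation terms come last in shapely's tuple, whereas they sit in the third column of the matrix; mixing up that order is the usual source of bugs when converting by hand.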

Example

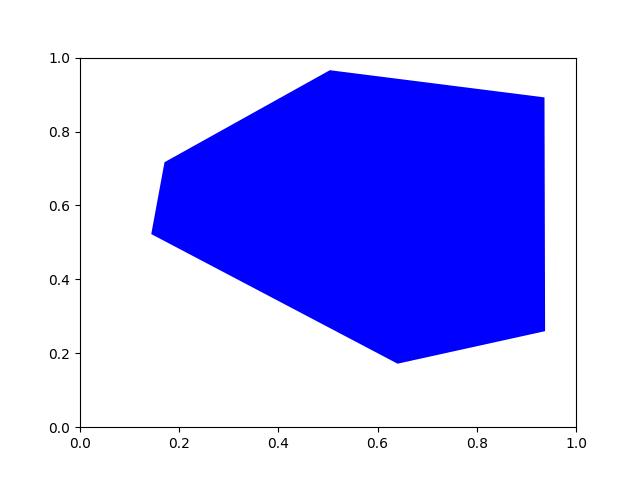

    >>> import kwimage
    >>> self = kwimage.Affine.random()
    >>> sh_transform = self.to_shapely()
    >>> # Transform points with kwimage and shapely
    >>> import shapely
    >>> from shapely.affinity import affine_transform
    >>> kw_poly = kwimage.Polygon.random()
    >>> kw_warp_poly = kw_poly.warp(self)
    >>> sh_poly = kw_poly.to_shapely()
    >>> sh_warp_poly = affine_transform(sh_poly, sh_transform)
    >>> kw_warp_poly_recon = kwimage.Polygon.from_shapely(sh_warp_poly)
    >>> assert kw_warp_poly_recon.to_shapely().equals_exact(kw_warp_poly.to_shapely(), tolerance=1e-6)

- to_skimage()

- Returns

skimage.transform.AffineTransform

Example

    >>> import numpy as np
    >>> import kwimage
    >>> self = kwimage.Affine.random()
    >>> tf = self.to_skimage()
    >>> # Transform points with kwimage and scikit-image
    >>> kw_poly = kwimage.Polygon.random()
    >>> kw_warp_xy = kw_poly.warp(self.matrix).exterior.data
    >>> sk_warp_xy = tf(kw_poly.exterior.data)
    >>> assert np.allclose(kw_warp_xy, sk_warp_xy)

- classmethod scale(scale)

Create a scale Affine object

- Parameters

scale (float | Tuple[float, float]) – x, y scale factor

- Returns

Affine

- classmethod translate(offset)

Create a translation Affine object

- Parameters

offset (float | Tuple[float, float]) – x, y translation factor

- Returns

Affine

Benchmark

    >>> # xdoctest: +REQUIRES(--benchmark)
    >>> # It is ~3x faster to use the more specific method
    >>> import numpy as np
    >>> import timerit
    >>> import kwimage
    >>> #
    >>> offset = np.random.rand(2)
    >>> ti = timerit.Timerit(100, bestof=10, verbose=2)
    >>> for timer in ti.reset('time'):
    >>>     with timer:
    >>>         kwimage.Affine.translate(offset)
    >>> #
    >>> for timer in ti.reset('time'):
    >>>     with timer:
    >>>         kwimage.Affine.affine(offset=offset)

- classmethod rotate(theta)

Create a rotation Affine object

- Parameters

theta (float) – counter-clockwise rotation angle in radians

- Returns

Affine
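For reference, the three specialised constructors above (scale, translate, rotate) correspond to the standard homogeneous 3x3 matrices. The pure-Python sketch below builds those matrices and applies them to a point; the rotation uses the same [[cos, -sin], [sin, cos]] form as R in the sympy examples of this page. The helper names are hypothetical, and kwimage's real constructors return Affine objects backed by numpy rather than nested lists.

```python
import math


def scale_matrix(scale):
    """Hypothetical helper: 3x3 scale matrix; a scalar scales both axes."""
    sx, sy = (scale, scale) if isinstance(scale, (int, float)) else scale
    return [[sx, 0.0, 0.0], [0.0, sy, 0.0], [0.0, 0.0, 1.0]]


def translate_matrix(offset):
    """Hypothetical helper: 3x3 translation matrix; a scalar shifts both axes."""
    tx, ty = (offset, offset) if isinstance(offset, (int, float)) else offset
    return [[1.0, 0.0, tx], [0.0, 1.0, ty], [0.0, 0.0, 1.0]]


def rotate_matrix(theta):
    """Hypothetical helper: 3x3 rotation by theta radians (same form as R in the sympy examples)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def apply(matrix, pt):
    """Apply a 3x3 affine matrix (last row [0, 0, 1]) to a 2D point."""
    x, y = pt
    return (matrix[0][0] * x + matrix[0][1] * y + matrix[0][2],
            matrix[1][0] * x + matrix[1][1] * y + matrix[1][2])


print(apply(scale_matrix((2, 3)), (1, 1)))          # (2.0, 3.0)
print(apply(translate_matrix((100, 200)), (1, 1)))  # (101.0, 201.0)
print(apply(rotate_matrix(math.pi / 2), (1, 0)))    # approximately (0.0, 1.0)
```

Each specialised matrix has only a few non-trivial entries, which is consistent with the benchmark above reporting that the dedicated constructors are faster than the general `Affine.affine`.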

- classmethod random(shape=None, rng=None, **kw)

Create a random Affine object

- Parameters

rng – random number generator

**kw – passed to Affine.random_params(). Can contain coercible random distributions for scale, offset, about, theta, and shearx.

- Returns

Affine

- classmethod random_params(rng=None, **kw)

- Parameters

rng – random number generator

**kw – can contain coercible random distributions for scale, offset, about, theta, and shearx.

- Returns

affine parameters suitable to be passed to Affine.affine

- Return type

Dict

Todo

[ ] improve kwargs parameterization

- decompose()

Decompose the affine matrix into its individual scale, translation, rotation, and skew parameters.

- Returns

decomposed offset, scale, theta, and shearx params

- Return type

Dict

References

- https://math.stackexchange.com/questions/612006/decompose-affine
- https://math.stackexchange.com/a/3521141/353527
- https://stackoverflow.com/questions/70357473/how-to-decompose-a-2x2-affine-matrix-with-sympy
- https://en.wikipedia.org/wiki/Transformation_matrix
- https://en.wikipedia.org/wiki/Shear_mapping
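The decomposition can be sketched for the T @ R @ E @ S ordering used in the sympy examples of this page (translation @ rotation @ x-shear @ scale, about the origin). The first column of the linear part is sx·(cos θ, sin θ), and rotating the second column back by -θ gives sy·(shearx, 1), so every parameter can be read off in closed form. The pure-Python sketch below assumes that ordering, sx > 0 and a non-singular matrix; it returns a tuple rather than the documented dict, and kwimage's actual implementation (angle normalisation, handling of reflections) may differ. `compose` and `decompose` here are hypothetical helpers.

```python
import math


def compose(sx, sy, theta, shearx, tx, ty):
    """Build the 3x3 matrix T @ R @ E @ S about the origin (hypothetical helper)."""
    c, s = math.cos(theta), math.sin(theta)
    # R @ E @ S written out: first column = sx * (c, s),
    # second column = sy * (shearx * c - s, shearx * s + c)
    return [[sx * c, sy * (shearx * c - s), tx],
            [sx * s, sy * (shearx * s + c), ty],
            [0.0, 0.0, 1.0]]


def decompose(matrix):
    """Recover (sx, sy, theta, shearx, tx, ty) from M = T @ R @ E @ S.

    Sketch only: assumes sx > 0 and a non-singular linear part. A negative
    sy (a reflection) is recovered as-is.
    """
    a, b, tx = matrix[0]
    c, d, ty = matrix[1]
    # First column is sx * (cos theta, sin theta)
    sx = math.hypot(a, c)
    theta = math.atan2(c, a)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    # Undo the rotation on the second column: R^T (b, d) = sy * (shearx, 1)
    sy = -sin_t * b + cos_t * d
    shearx = (cos_t * b + sin_t * d) / sy
    return sx, sy, theta, shearx, tx, ty


params = (3.05, 3.07, 0.4, 0.7, 10.5, 12.1)
print(decompose(compose(*params)))  # approximately the original params
```

Because theta comes from `atan2`, it is returned in (-pi, pi]; angles outside that range (as in the drift test below, which normalises theta before comparing) only round-trip up to a multiple of 2*pi.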

Example

    >>> import numpy as np
    >>> from kwimage.transform import *  # NOQA
    >>> self = Affine.random()
    >>> params = self.decompose()
    >>> recon = Affine.coerce(**params)
    >>> params2 = recon.decompose()
    >>> pt = np.vstack([np.random.rand(2, 1), [1]])
    >>> result1 = self.matrix[0:2] @ pt
    >>> result2 = recon.matrix[0:2] @ pt
    >>> assert np.allclose(result1, result2)

    >>> self = Affine.scale(0.001) @ Affine.random()
    >>> params = self.decompose()
    >>> self.det()

Example

    >>> # xdoctest: +REQUIRES(module:pandas)
    >>> import numpy as np
    >>> import ubelt as ub
    >>> from kwimage.transform import *  # NOQA
    >>> import kwimage
    >>> import pandas as pd
    >>> # Test consistency of decompose + reconstruct
    >>> param_grid = list(ub.named_product({
    >>>     'theta': np.linspace(-4 * np.pi, 4 * np.pi, 3),
    >>>     'shearx': np.linspace(- 10 * np.pi, 10 * np.pi, 4),
    >>> }))
    >>> def normalize_angle(radian):
    >>>     return np.arctan2(np.sin(radian), np.cos(radian))
    >>> for pextra in param_grid:
    >>>     params0 = dict(scale=(3.05, 3.07), offset=(10.5, 12.1), **pextra)
    >>>     self = recon0 = kwimage.Affine.affine(**params0)
    >>>     self.decompose()
    >>>     # Test drift with multiple decompose / reconstructions
    >>>     params_list = [params0]
    >>>     recon_list = [recon0]
    >>>     n = 4
    >>>     for _ in range(n):
    >>>         prev = recon_list[-1]
    >>>         params = prev.decompose()
    >>>         recon = kwimage.Affine.coerce(**params)
    >>>         params_list.append(params)
    >>>         recon_list.append(recon)
    >>>     params_df = pd.DataFrame(params_list)
    >>>     #print('params_list = {}'.format(ub.repr2(params_list, nl=1, precision=5)))
    >>>     print(params_df)
    >>>     assert ub.allsame(normalize_angle(params_df['theta']), eq=np.isclose)
    >>>     assert ub.allsame(params_df['shearx'], eq=np.allclose)
    >>>     assert ub.allsame(params_df['scale'], eq=np.allclose)
    >>>     assert ub.allsame(params_df['offset'], eq=np.allclose)

- classmethod affine(scale=None, offset=None, theta=None, shear=None, about=None, shearx=None, array_cls=None, math_mod=None, **kwargs)

Create an affine matrix from high-level parameters

- Parameters

scale (float | Tuple[float, float]) – x, y scale factor

offset (float | Tuple[float, float]) – x, y translation factor

theta (float) – counter-clockwise rotation angle in radians

shearx (float) – shear factor parallel to the x-axis.

about (float | Tuple[float, float]) – x, y location of the origin

shear (float) – BROKEN, don't use. Counter-clockwise shear angle in radians.

Todo

- [ ] Add aliases?
  - origin : alias for about
  - rotation : alias for theta
  - translation : alias for offset

- Returns

the constructed Affine object

- Return type

Affine

Example

    >>> import numpy as np
    >>> import ubelt as ub
    >>> import kwarray
    >>> from kwimage.transform import *  # NOQA
    >>> rng = kwarray.ensure_rng(None)
    >>> scale = rng.randn(2) * 10
    >>> offset = rng.randn(2) * 10
    >>> about = rng.randn(2) * 10
    >>> theta = rng.randn() * 10
    >>> shearx = rng.randn() * 10
    >>> # Create combined matrix from all params
    >>> F = Affine.affine(
    >>>     scale=scale, offset=offset, theta=theta, shearx=shearx,
    >>>     about=about)
    >>> # Test that combining components matches
    >>> S = Affine.affine(scale=scale)
    >>> T = Affine.affine(offset=offset)
    >>> R = Affine.affine(theta=theta)
    >>> E = Affine.affine(shearx=shearx)
    >>> O = Affine.affine(offset=about)
    >>> # combine (note shear must be on the RHS of rotation)
    >>> alt = O @ T @ R @ E @ S @ O.inv()
    >>> print('F = {}'.format(ub.repr2(F.matrix.tolist(), nl=1)))
    >>> print('alt = {}'.format(ub.repr2(alt.matrix.tolist(), nl=1)))
    >>> assert np.all(np.isclose(alt.matrix, F.matrix))
    >>> pt = np.vstack([np.random.rand(2, 1), [[1]]])
    >>> warp_pt1 = (F.matrix @ pt)
    >>> warp_pt2 = (alt.matrix @ pt)
    >>> assert np.allclose(warp_pt2, warp_pt1)

Sympy

    >>> # xdoctest: +SKIP
    >>> import numpy as np
    >>> import ubelt as ub
    >>> import sympy
    >>> # Shows the symbolic construction of the code
    >>> # https://groups.google.com/forum/#!topic/sympy/k1HnZK_bNNA
    >>> from sympy.abc import theta
    >>> params = x0, y0, sx, sy, theta, shearx, tx, ty = sympy.symbols(
    >>>     'x0, y0, sx, sy, theta, shearx, tx, ty')
    >>> # move the center to 0, 0
    >>> tr1_ = np.array([[1, 0, -x0],
    >>>                  [0, 1, -y0],
    >>>                  [0, 0,   1]])
    >>> # Define core components of the affine transform
    >>> S = np.array([  # scale
    >>>     [sx,  0, 0],
    >>>     [ 0, sy, 0],
    >>>     [ 0,  0, 1]])
    >>> E = np.array([  # x-shear
    >>>     [1, shearx, 0],
    >>>     [0,      1, 0],
    >>>     [0,      0, 1]])
    >>> R = np.array([  # rotation
    >>>     [sympy.cos(theta), -sympy.sin(theta), 0],
    >>>     [sympy.sin(theta),  sympy.cos(theta), 0],
    >>>     [               0,                 0, 1]])
    >>> T = np.array([  # translation
    >>>     [1, 0, tx],
    >>>     [0, 1, ty],
    >>>     [0, 0,  1]])
    >>> # Construct the affine 3x3 about the origin
    >>> aff0 = np.array(sympy.simplify(T @ R @ E @ S))
    >>> # move 0, 0 back to the specified origin
    >>> tr2_ = np.array([[1, 0, x0],
    >>>                  [0, 1, y0],
    >>>                  [0, 0,  1]])
    >>> # combine transformations
    >>> aff = tr2_ @ aff0 @ tr1_
    >>> print('aff = {}'.format(ub.repr2(aff.tolist(), nl=1)))
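The `about` parameter works by conjugation, as in the `tr2_ @ aff0 @ tr1_` line of the symbolic construction: shift the pivot to the origin, apply the transform, then shift back. A direct consequence is that the pivot itself is a fixed point. The pure-Python sketch below checks this for a rotation about (256, 256), the same pivot used in the 'rotate+translate' panel of the first Affine example; `matmul3`, `rotation_about` and `apply` are hypothetical helpers, not kwimage API.

```python
import math


def matmul3(A, B):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def rotation_about(theta, about):
    """tr2_ @ R @ tr1_: move `about` to the origin, rotate, move it back."""
    x0, y0 = about
    c, s = math.cos(theta), math.sin(theta)
    tr1 = [[1, 0, -x0], [0, 1, -y0], [0, 0, 1]]
    R = [[c, -s, 0], [s, c, 0], [0, 0, 1]]
    tr2 = [[1, 0, x0], [0, 1, y0], [0, 0, 1]]
    return matmul3(tr2, matmul3(R, tr1))


def apply(matrix, pt):
    """Apply a 3x3 affine matrix (last row [0, 0, 1]) to a 2D point."""
    x, y = pt
    return (matrix[0][0] * x + matrix[0][1] * y + matrix[0][2],
            matrix[1][0] * x + matrix[1][1] * y + matrix[1][2])


M = rotation_about(0.123 * math.tau, about=(256, 256))
print(apply(M, (256, 256)))  # approximately (256.0, 256.0): the pivot stays fixed
print(apply(M, (0, 0)))      # other points move around the pivot
```

Folding the two shifts into one matrix is why a rotation "about" a point ends up with a non-zero translation column even when no `offset` is given.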

- classmethod fit(pts1, pts2)

Fit an affine transformation between a set of corresponding points

- Parameters

pts1 (ndarray) – An Nx2 array of points in “space 1”.

pts2 (ndarray) – A corresponding Nx2 array of points in “space 2”.

- Returns

a transform that warps from “space 1” to “space 2”.

- Return type

Affine

Note

An affine matrix has 6 degrees of freedom. Each point correspondence provides 2 constraints, so at least 3 non-collinear point pairs are needed.
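Because x' depends only on (a, b, xoff) and y' only on (d, e, yoff), the fit splits into two independent 3-unknown least-squares problems that share the same design matrix, whose rows are [x, y, 1]. The pure-Python sketch below solves them via the 3x3 normal equations. It is an illustration of ordinary least squares under that setup, not kwimage's implementation (which may use a different solver); `fit_affine` and `_solve3` are hypothetical names.

```python
def fit_affine(pts1, pts2):
    """Least-squares 3x3 affine matrix mapping pts1 -> pts2 (hypothetical helper).

    Solves the normal equations (A^T A) p = A^T b once for each output
    coordinate, where each row of A is [x, y, 1]. Needs at least
    3 non-collinear correspondences.
    """
    if len(pts1) != len(pts2) or len(pts1) < 3:
        raise ValueError('need at least 3 corresponding point pairs')
    rows = [(x, y, 1.0) for x, y in pts1]
    # Normal matrix A^T A (3x3), shared by both output coordinates
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    matrix = []
    for dim in range(2):
        atb = [sum(r[i] * p[dim] for r, p in zip(rows, pts2)) for i in range(3)]
        matrix.append(_solve3(ata, atb))
    matrix.append([0.0, 0.0, 1.0])
    return matrix


def _solve3(A, b):
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    M = [list(A[i]) + [b[i]] for i in range(3)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError('points are degenerate (e.g. collinear)')
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, 3):
            factor = M[r][col] / M[col][col]
            for k in range(col, 4):
                M[r][k] -= factor * M[col][k]
    # Back substitution
    x = [0.0, 0.0, 0.0]
    for i in reversed(range(3)):
        x[i] = (M[i][3] - sum(M[i][k] * x[k] for k in range(i + 1, 3))) / M[i][i]
    return x


# Usage: three correspondences of a pure translation by (5, -2)
src = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
dst = [(5.0, -2.0), (6.0, -2.0), (5.0, -1.0)]
print(fit_affine(src, dst))  # approximately [[1, 0, 5], [0, 1, -2], [0, 0, 1]]
```

With exactly 3 non-collinear pairs the system is square and the fit is exact; extra pairs (like the 6 used in the example below) average out noise.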

References

https://www.cs.ubc.ca/~lowe/papers/ijcv04.pdf page 22

Example

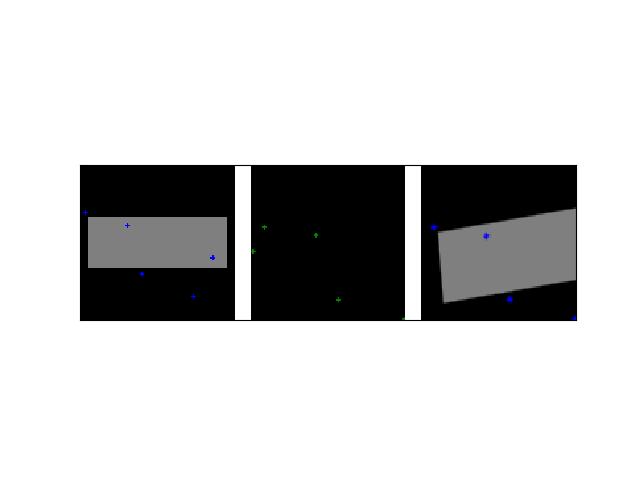

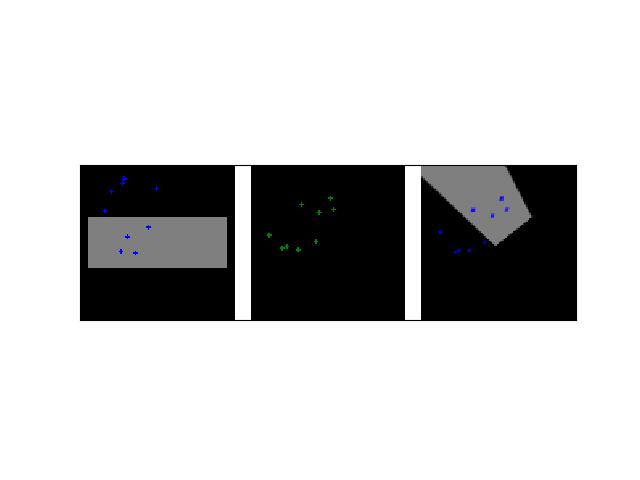

    >>> # Create a set of points, warp them, then recover the warp
    >>> import numpy as np
    >>> import kwimage
    >>> points = kwimage.Points.random(6).scale(64)
    >>> #A1 = kwimage.Affine.affine(scale=0.9, theta=-3.2, offset=(2, 3), about=(32, 32), skew=2.3)
    >>> #A2 = kwimage.Affine.affine(scale=0.8, theta=0.8, offset=(2, 0), about=(32, 32))
    >>> A1 = kwimage.Affine.random()
    >>> A2 = kwimage.Affine.random()
    >>> A12_real = A2 @ A1.inv()
    >>> points1 = points.warp(A1)
    >>> points2 = points.warp(A2)
    >>> # Recover the warp
    >>> pts1, pts2 = points1.xy, points2.xy
    >>> A_recovered = kwimage.Affine.fit(pts1, pts2)
    >>> assert np.all(np.isclose(A_recovered.matrix, A12_real.matrix))
    >>> # xdoctest: +REQUIRES(--show)
    >>> import kwplot
    >>> kwplot.autompl()
    >>> base1 = np.zeros((96, 96, 3))
    >>> base1[32:-32, 5:-5] = 0.5
    >>> base2 = np.zeros((96, 96, 3))
    >>> img1 = points1.draw_on(base1, radius=3, color='blue')
    >>> img2 = points2.draw_on(base2, radius=3, color='green')
    >>> img1_warp = kwimage.warp_affine(img1, A_recovered)
    >>> canvas = kwimage.stack_images([img1, img2, img1_warp], pad=10, axis=1, bg_value=(1., 1., 1.))
    >>> kwplot.imshow(canvas)

- class kwimage.Boxes(data, format=None, check=True)

Bases: _BoxConversionMixins, _BoxPropertyMixins, _BoxTransformMixins, _BoxDrawMixins, NiceRepr

Converts boxes between different formats as long as the last dimension contains 4 coordinates and the format is specified.

This is a convenience class and should not store the data for very long. The general idiom is to create the class, convert the data, then get the raw data and let the object be garbage collected. This helps ensure that your code stays portable and understandable if this class is not available.
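The format codes used throughout this class are simple re-parameterisations of the same four numbers. The pure-Python sketch below converts a single box between the three codes that appear in the examples (ltrb, xywh, cxywh) and reproduces the values from the conversion demo. The function names are hypothetical; kwimage performs these conversions vectorised over whole numpy arrays or torch tensors.

```python
def xywh_to_ltrb(box):
    """(left-x, top-y, width, height) -> (left, top, right, bottom)."""
    x, y, w, h = box
    return [x, y, x + w, y + h]


def ltrb_to_xywh(box):
    """(left, top, right, bottom) -> (left-x, top-y, width, height)."""
    l, t, r, b = box
    return [l, t, r - l, b - t]


def xywh_to_cxywh(box):
    """(left-x, top-y, width, height) -> (center-x, center-y, width, height)."""
    x, y, w, h = box
    return [x + w / 2, y + h / 2, w, h]


def cxywh_to_xywh(box):
    """(center-x, center-y, width, height) -> (left-x, top-y, width, height)."""
    cx, cy, w, h = box
    return [cx - w / 2, cy - h / 2, w, h]


print(xywh_to_cxywh([25, 30, 15, 10]))  # [32.5, 35.0, 15, 10]
print(xywh_to_ltrb([25, 30, 15, 10]))   # [25, 30, 40, 40]
print(ltrb_to_xywh([5, 5, 50, 50]))     # [5, 5, 45, 45]
```

Keeping the format explicit, as `kwimage.Boxes(data, 'ltrb')` does, is what makes these conversions unambiguous: the same four numbers mean different boxes under different codes.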

Example

    >>> # xdoctest: +IGNORE_WHITESPACE
    >>> import kwimage
    >>> import numpy as np
    >>> import ubelt as ub
    >>> # Given an array / tensor that represents one or more boxes
    >>> data = np.array([[ 0,  0, 10, 10],
    >>>                  [ 5,  5, 50, 50],
    >>>                  [20,  0, 30, 10]])
    >>> # The kwimage.Boxes data structure is a thin fast wrapper
    >>> # that provides methods for operating on the boxes.
    >>> # It requires that the user explicitly provide a code that denotes
    >>> # the format of the boxes (i.e. what each column represents)
    >>> boxes = kwimage.Boxes(data, 'ltrb')
    >>> # This means that there is no ambiguity about box format
    >>> # The representation string of the Boxes object demonstrates this
    >>> print('boxes = {!r}'.format(boxes))
    boxes = <Boxes(ltrb,
        array([[ 0, 0, 10, 10],
               [ 5, 5, 50, 50],
               [20, 0, 30, 10]]))>
    >>> # if you pass this data around. You can convert to other formats
    >>> # For docs on available format codes see :class:`BoxFormat`.
    >>> # In this example we will convert (left, top, right, bottom)
    >>> # to (left-x, top-y, width, height).
    >>> boxes.toformat('xywh')
    <Boxes(xywh,
        array([[ 0, 0, 10, 10],
               [ 5, 5, 45, 45],
               [20, 0, 10, 10]]))>
    >>> # In addition to format conversion there are other operations
    >>> # We can quickly (using a C-backend) find IoUs
    >>> ious = boxes.ious(boxes)
    >>> print('{}'.format(ub.repr2(ious, nl=1, precision=2, with_dtype=False)))
    np.array([[1. , 0.01, 0. ],
              [0.01, 1. , 0.02],
              [0. , 0.02, 1. ]])
    >>> # We can ask for the area of each box
    >>> print('boxes.area = {}'.format(ub.repr2(boxes.area, nl=0, with_dtype=False)))
    boxes.area = np.array([[ 100],[2025],[ 100]])
    >>> # We can ask for the center of each box
    >>> print('boxes.center = {}'.format(ub.repr2(boxes.center, nl=1, with_dtype=False)))
    boxes.center = (
        np.array([[ 5. ],[27.5],[25. ]]),
        np.array([[ 5. ],[27.5],[ 5. ]]),
    )
    >>> # We can translate / scale the boxes
    >>> boxes.translate((10, 10)).scale(100)
    <Boxes(ltrb,
        array([[1000., 1000., 2000., 2000.],
               [1500., 1500., 6000., 6000.],
               [3000., 1000., 4000., 2000.]]))>
    >>> # We can clip the bounding boxes
    >>> boxes.translate((10, 10)).scale(100).clip(1200, 1200, 1700, 1800)
    <Boxes(ltrb,
        array([[1200., 1200., 1700., 1800.],
               [1500., 1500., 1700., 1800.],
               [1700., 1200., 1700., 1800.]]))>
    >>> # We can perform arbitrary warping of the boxes
    >>> # (note that if the transform is not axis aligned, the axis aligned
    >>> # bounding box of the transform result will be returned)
    >>> transform = np.array([[-0.83907153,  0.54402111,  0. ],
    >>>                       [-0.54402111, -0.83907153,  0. ],
    >>>                       [ 0.        ,  0.        ,  1. ]])
    >>> boxes.warp(transform)
    <Boxes(ltrb,
        array([[ -8.3907153 , -13.8309264 , 5.4402111 , 0. ],
               [-39.23347095, -69.154632 , 23.00569785, -6.9154632 ],
               [-25.1721459 , -24.7113486 , -11.3412195 , -10.8804222 ]]))>
    >>> # Note, that we can transform the box to a Polygon for more
    >>> # accurate warping.
    >>> transform = np.array([[-0.83907153,  0.54402111,  0. ],
    >>>                       [-0.54402111, -0.83907153,  0. ],
    >>>                       [ 0.        ,  0.        ,  1. ]])
    >>> warped_polys = boxes.to_polygons().warp(transform)
    >>> print(ub.repr2(warped_polys.data, sv=1))
    [
        <Polygon({
            'exterior': <Coords(data=
                array([[ 0. , 0. ],
                       [ 5.4402111, -8.3907153],
                       [ -2.9505042, -13.8309264],
                       [ -8.3907153, -5.4402111],
                       [ 0. , 0. ]]))>,
            'interiors': [],
        })>,
        <Polygon({
            'exterior': <Coords(data=
                array([[ -1.4752521 , -6.9154632 ],
                       [ 23.00569785, -44.67368205],
                       [-14.752521 , -69.154632 ],
                       [-39.23347095, -31.39641315],
                       [ -1.4752521 , -6.9154632 ]]))>,
            'interiors': [],
        })>,
        <Polygon({
            'exterior': <Coords(data=
                array([[-16.7814306, -10.8804222],
                       [-11.3412195, -19.2711375],
                       [-19.7319348, -24.7113486],
                       [-25.1721459, -16.3206333],
                       [-16.7814306, -10.8804222]]))>,
            'interiors': [],
        })>,
    ]
    >>> # The kwimage.Boxes data structure is also convertible to
    >>> # several alternative data structures, like shapely, coco, and imgaug.
    >>> print(ub.repr2(boxes.to_shapely(), sv=1))
    [
        POLYGON ((0 0, 0 10, 10 10, 10 0, 0 0)),
        POLYGON ((5 5, 5 50, 50 50, 50 5, 5 5)),
        POLYGON ((20 0, 20 10, 30 10, 30 0, 20 0)),
    ]
    >>> # xdoctest: +REQUIRES(module:imgaug)
    >>> print(ub.repr2(boxes[0:1].to_imgaug(shape=(100, 100)), sv=1))
    BoundingBoxesOnImage([BoundingBox(x1=0.0000, y1=0.0000, x2=10.0000, y2=10.0000, label=None)], shape=(100, 100))
    >>> # xdoctest: -REQUIRES(module:imgaug)
    >>> print(ub.repr2(list(boxes.to_coco()), sv=1))
    [
        [0, 0, 10, 10],
        [5, 5, 45, 45],
        [20, 0, 10, 10],
    ]
    >>> # Finally, when you are done with your boxes object, you can
    >>> # unwrap the raw data by using the ``.data`` attribute
    >>> # all operations are done on this data, which gives the
    >>> # kwimage.Boxes data structure almost no overhead when
    >>> # inserted into existing code.
    >>> print('boxes.data =\n{}'.format(ub.repr2(boxes.data, nl=1)))
    boxes.data =
    np.array([[ 0, 0, 10, 10],
              [ 5, 5, 50, 50],
              [20, 0, 30, 10]], dtype=np.int64)
    >>> # xdoctest: +REQUIRES(module:torch)
    >>> # This data structure was designed for use with both torch
    >>> # and numpy, the underlying data can be either an array or tensor.
    >>> boxes.tensor()
    <Boxes(ltrb,
        tensor([[ 0, 0, 10, 10],
                [ 5, 5, 50, 50],
                [20, 0, 30, 10]]))>
    >>> boxes.numpy()
    <Boxes(ltrb,
        array([[ 0, 0, 10, 10],
               [ 5, 5, 50, 50],
               [20, 0, 30, 10]]))>

Example

    >>> # xdoctest: +IGNORE_WHITESPACE
    >>> from kwimage.structs.boxes import *  # NOQA
    >>> # Demo of conversion methods
    >>> import kwimage
    >>> kwimage.Boxes([[25, 30, 15, 10]], 'xywh')
    <Boxes(xywh, array([[25, 30, 15, 10]]))>
    >>> kwimage.Boxes([[25, 30, 15, 10]], 'xywh').to_xywh()
    <Boxes(xywh, array([[25, 30, 15, 10]]))>
    >>> kwimage.Boxes([[25, 30, 15, 10]], 'xywh').to_cxywh()
    <Boxes(cxywh, array([[32.5, 35. , 15. , 10. ]]))>
    >>> kwimage.Boxes([[25, 30, 15, 10]], 'xywh').to_ltrb()
    <Boxes(ltrb, array([[25, 30, 40, 40]]))>
    >>> kwimage.Boxes([[25, 30, 15, 10]], 'xywh').scale(2).to_ltrb()
    <Boxes(ltrb, array([[50., 60., 80., 80.]]))>
    >>> # xdoctest: +REQUIRES(module:torch)
    >>> import torch
    >>> kwimage.Boxes(torch.FloatTensor([[25, 30, 15, 20]]), 'xywh').scale(.1).to_ltrb()
    <Boxes(ltrb, tensor([[ 2.5000, 3.0000, 4.0000, 5.0000]]))>

Note

In the following examples we show cases where Boxes can hold a single 1-dimensional box array. This is a holdover from an older codebase, and some functions may assume that the input is at least 2-D. Thus, when representing a single bounding box, it is best practice to view it as a list of 1 box. While many functions will work in the 1-D case, not all functions have been tested, and thus we cannot guarantee correctness.

Example

    >>> # xdoctest: +IGNORE_WHITESPACE
    >>> from kwimage import Boxes
    >>> Boxes([25, 30, 15, 10], 'xywh')
    <Boxes(xywh, array([25, 30, 15, 10]))>
    >>> Boxes([25, 30, 15, 10], 'xywh').to_xywh()
    <Boxes(xywh, array([25, 30, 15, 10]))>
    >>> Boxes([25, 30, 15, 10], 'xywh').to_cxywh()
    <Boxes(cxywh, array([32.5, 35. , 15. , 10. ]))>
    >>> Boxes([25, 30, 15, 10], 'xywh').to_ltrb()
    <Boxes(ltrb, array([25, 30, 40, 40]))>
    >>> Boxes([25, 30, 15, 10], 'xywh').scale(2).to_ltrb()
    <Boxes(ltrb, array([50., 60., 80., 80.]))>
    >>> # xdoctest: +REQUIRES(module:torch)
    >>> import torch
    >>> Boxes(torch.FloatTensor([[25, 30, 15, 20]]), 'xywh').scale(.1).to_ltrb()
    <Boxes(ltrb, tensor([[ 2.5000, 3.0000, 4.0000, 5.0000]]))>

Example

    >>> from kwimage.structs.boxes import Boxes, BoxFormat
    >>> datas = [
    >>>     [1, 2, 3, 4],
    >>>     [[1, 2, 3, 4], [4, 5, 6, 7]],
    >>>     [[[1, 2, 3, 4], [4, 5, 6, 7]]],
    >>> ]
    >>> formats = BoxFormat.cannonical
    >>> for format1 in formats:
    >>>     for data in datas:
    >>>         self = box1 = Boxes(data, format1)
    >>>         for format2 in formats:
    >>>             box2 = box1.toformat(format2)
    >>>             back = box2.toformat(format1)
    >>>             assert box1 == back

- classmethod random(num=1, scale=1.0, format='xywh', anchors=None, anchor_std=0.16666666666666666, tensor=False, rng=None)

Makes random boxes; typically for testing purposes

- Parameters

num (int) – number of boxes to generate

scale (float | Tuple[float, float]) – size of the image dimensions

format (str) – format of boxes to be created (e.g. ltrb, xywh)

anchors (ndarray) – normalized width / heights of anchor boxes to perturb and randomly place (must be in range 0-1)

anchor_std (float) – magnitude of noise applied to anchor shapes

tensor (bool) – if True, returns boxes in tensor format

rng (None | int | RandomState) – initial random seed

- Returns

random boxes

- Return type

Boxes

Example

    >>> # xdoctest: +IGNORE_WHITESPACE
    >>> import numpy as np
    >>> from kwimage import Boxes
    >>> Boxes.random(3, rng=0, scale=100)
    <Boxes(xywh,
        array([[54, 54, 6, 17],
               [42, 64, 1, 25],
               [79, 38, 17, 14]]))>
    >>> # xdoctest: +REQUIRES(module:torch)
    >>> Boxes.random(3, rng=0, scale=100).tensor()
    <Boxes(xywh,
        tensor([[ 54, 54, 6, 17],
                [ 42, 64, 1, 25],
                [ 79, 38, 17, 14]]))>
    >>> anchors = np.array([[.5, .5], [.3, .3]])
    >>> Boxes.random(3, rng=0, scale=100, anchors=anchors)
    <Boxes(xywh,
        array([[ 2, 13, 51, 51],
               [32, 51, 32, 36],
               [36, 28, 23, 26]]))>

Example

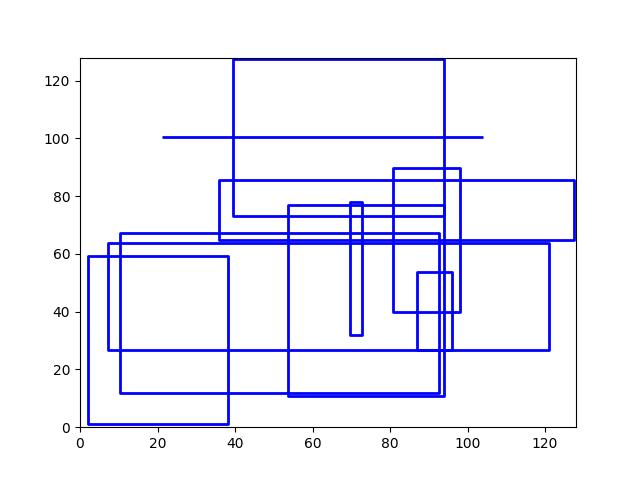

    >>> # Boxes position/shape within 0-1 space should be uniform.
    >>> # xdoctest: +REQUIRES(--show)
    >>> import kwplot
    >>> kwplot.autompl()
    >>> fig = kwplot.figure(fnum=1, doclf=True)
    >>> fig.gca().set_xlim(0, 128)
    >>> fig.gca().set_ylim(0, 128)
    >>> import kwimage
    >>> kwimage.Boxes.random(num=10).scale(128).draw()

- classmethod concatenate(boxes, axis=0)

Concatenates multiple boxes together

- Parameters

boxes (Sequence[Boxes]) – list of boxes to concatenate

axis (int) – axis to stack on. Defaults to 0.

- Returns

stacked boxes

- Return type

Boxes

Example

    >>> import numpy as np
    >>> from kwimage import Boxes
    >>> boxes = [Boxes.random(3) for _ in range(3)]
    >>> new = Boxes.concatenate(boxes)
    >>> assert len(new) == 9
    >>> assert np.all(new.data[3:6] == boxes[1].data)

Example

    >>> from kwimage import Boxes
    >>> boxes = [Boxes.random(3) for _ in range(3)]
    >>> boxes[0].data = boxes[0].data[0]
    >>> boxes[1].data = boxes[0].data[0:0]
    >>> new = Boxes.concatenate(boxes)
    >>> assert len(new) == 4
    >>> # xdoctest: +REQUIRES(module:torch)
    >>> new = Boxes.concatenate([b.tensor() for b in boxes])
    >>> assert len(new) == 4

- compress(flags, axis=0, inplace=False)

Filters boxes based on a boolean criterion

- Parameters

flags (ArrayLike) – true for items to be kept. Extended type: ArrayLike[bool]

axis (int) – you usually want this to be 0

inplace (bool) – if True, modifies this object

- Returns

the boxes corresponding to where flags were true

- Return type

Boxes

Example

    >>> from kwimage import Boxes
    >>> self = Boxes([[25, 30, 15, 10]], 'ltrb')
    >>> self.compress([True])
    <Boxes(ltrb, array([[25, 30, 15, 10]]))>
    >>> self.compress([False])
    <Boxes(ltrb, array([], shape=(0, 4), dtype=int64))>

- take(idxs, axis=0, inplace=False)

Takes a subset of items at specific indices

- Parameters

idxs (ArrayLike) – Indices of items to take. Extended type ArrayLike[int].

axis (int) – you usually want this to be 0

inplace (bool) – if True, modifies this object

- Returns

the boxes corresponding to the specified indices

- Return type

Boxes

Example

    >>> from kwimage import Boxes
    >>> self = Boxes([[25, 30, 15, 10]], 'ltrb')
    >>> self.take([0])
    <Boxes(ltrb, array([[25, 30, 15, 10]]))>
    >>> self.take([])
    <Boxes(ltrb, array([], shape=(0, 4), dtype=int64))>

- is_tensor()

Is the backend powered by torch?

- Returns

True if the Boxes are torch tensors

- Return type

bool

- is_numpy()

Is the backend powered by numpy?

- Returns

True if the Boxes are numpy arrays

- Return type

bool

- property device

If the backend is torch, returns the data device; otherwise None.

- astype(dtype)

Changes the type of the internal array used to represent the boxes.

Note

This operation is not in-place.

- Returns

the boxes with the chosen type

- Return type

Boxes

Example

    >>> # xdoctest: +IGNORE_WHITESPACE
    >>> # xdoctest: +REQUIRES(module:torch)
    >>> from kwimage import Boxes
    >>> Boxes.random(3, 100, rng=0).tensor().astype('int32')
    <Boxes(xywh,
        tensor([[54, 54, 6, 17],
                [42, 64, 1, 25],
                [79, 38, 17, 14]], dtype=torch.int32))>
    >>> Boxes.random(3, 100, rng=0).numpy().astype('int32')
    <Boxes(xywh,
        array([[54, 54, 6, 17],
               [42, 64, 1, 25],
               [79, 38, 17, 14]], dtype=int32))>
    >>> Boxes.random(3, 100, rng=0).tensor().astype('float32')
    >>> Boxes.random(3, 100, rng=0).numpy().astype('float32')

- round(inplace=False)

Rounds data coordinates to the nearest integer.

This operation is applied directly to the box coordinates, so its output will depend on the format the boxes are stored in.

- Parameters

inplace (bool) – if True, modifies this object. Defaults to False.

- Returns

the boxes with rounded coordinates

- Return type

Boxes

- SeeAlso:

Example

    >>> import kwimage
    >>> self = kwimage.Boxes.random(3, rng=0).scale(10)
    >>> new = self.round()
    >>> print('self = {!r}'.format(self))
    >>> print('new = {!r}'.format(new))
    self = <Boxes(xywh,
        array([[5.48813522, 5.44883192, 0.53949833, 1.70306146],
               [4.23654795, 6.4589411 , 0.13932407, 2.45878875],
               [7.91725039, 3.83441508, 1.71937704, 1.45453393]]))>
    new = <Boxes(xywh,
        array([[5., 5., 1., 2.],
               [4., 6., 0., 2.],
               [8., 4., 2., 1.]]))>

- quantize(inplace=False, dtype=<class 'numpy.int32'>)

Converts the box to integer coordinates.

This operation takes the floor of the left/top edges and the ceil of the right/bottom edges. Thus the area of the box will never decrease, but the width / height of the box will often increase by a pixel.
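The floor/ceil rule can be sketched for a single xywh box in pure Python: convert to edges, round each edge outward, and convert back. `quantize_xywh` is a hypothetical helper, not kwimage's implementation (which works on whole arrays and honours the `dtype` argument); it reproduces the width growth shown in the second example of this method and the first row of the first example.

```python
import math


def quantize_xywh(box):
    """Round an xywh box outward to integer pixel edges (hypothetical helper).

    The left/top edges are floored and the right/bottom edges are ceiled,
    so the quantized box always contains the original one.
    """
    x, y, w, h = box
    left, top = math.floor(x), math.floor(y)
    right, bottom = math.ceil(x + w), math.ceil(y + h)
    return [left, top, right - left, bottom - top]


print(quantize_xywh([0.5, 0.0, 10.0, 10.0]))  # [0, 0, 11, 10]: the width grows by a pixel
```

Rounding the edges rather than the width and height is what guarantees containment; rounding x, y, w and h independently (as `round()` does in xywh format) can shrink a box.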

- Parameters

inplace (bool) – if True, modifies this object

dtype (type) – type to cast as

- Returns

the boxes with quantized coordinates

- Return type

Boxes

- SeeAlso:

Boxes.round(); Boxes.resize() if you need to ensure the size does not change

Example

    >>> import kwimage
    >>> self = kwimage.Boxes.random(3, rng=0).scale(10)
    >>> new = self.quantize()
    >>> print('self = {!r}'.format(self))
    >>> print('new = {!r}'.format(new))
    self = <Boxes(xywh,
        array([[5.48813522, 5.44883192, 0.53949833, 1.70306146],
               [4.23654795, 6.4589411 , 0.13932407, 2.45878875],
               [7.91725039, 3.83441508, 1.71937704, 1.45453393]]))>
    new = <Boxes(xywh,
        array([[5, 5, 2, 3],
               [4, 6, 1, 3],
               [7, 3, 3, 3]], dtype=int32))>

Example

    >>> import kwimage
    >>> # Be careful if it is important to preserve the width/height
    >>> self = kwimage.Boxes([[0, 0, 10, 10]], 'xywh')
    >>> aff = kwimage.Affine.coerce(offset=(0.5, 0.0))
    >>> warped = self.warp(aff)
    >>> new = warped.quantize(dtype=int)
    >>> print('self = {!r}'.format(self))
    >>> print('warped = {!r}'.format(warped))
    >>> print('new = {!r}'.format(new))
    self = <Boxes(xywh, array([[ 0, 0, 10, 10]]))>
    warped = <Boxes(xywh, array([[ 0.5, 0. , 10. , 10. ]]))>
    new = <Boxes(xywh, array([[ 0, 0, 11, 10]]))>

Example

    >>> import numpy as np
    >>> import kwimage
    >>> self = kwimage.Boxes.random(3, rng=0)
    >>> orig = self.copy()
    >>> self.quantize(inplace=True)
    >>> assert np.any(self.data != orig.data)

- numpy()

Converts tensors to numpy. Does not change memory if possible.

- Returns

the boxes with a numpy backend

- Return type

Boxes

Example

    >>> # xdoctest: +REQUIRES(module:torch)
    >>> from kwimage import Boxes
    >>> self = Boxes.random(3).tensor()
    >>> newself = self.numpy()
    >>> self.data[0, 0] = 0
    >>> assert newself.data[0, 0] == 0
    >>> self.data[0, 0] = 1
    >>> assert self.data[0, 0] == 1

- tensor(device=NoParam)

Converts numpy to tensors. Does not change memory if possible.

- Parameters

device (int | None | torch.device) – The torch device to put the backend tensors on

- Returns

the boxes with a torch backend

- Return type

Boxes

Example

    >>> # xdoctest: +REQUIRES(module:torch)
    >>> from kwimage import Boxes
    >>> self = Boxes.random(3)
    >>> newself = self.tensor()
    >>> self.data[0, 0] = 0
    >>> assert newself.data[0, 0] == 0
    >>> self.data[0, 0] = 1
    >>> assert self.data[0, 0] == 1

- ious(other, bias=0, impl='auto', mode=None)[source]¶

Intersection over union.

Compute IoUs (intersection area over union area) between these boxes and another set of boxes. This is a symmetric measure of similarity between boxes.

Todo

- [ ] Add a pairwise flag to toggle between one-vs-one and all-vs-all computation, i.e. add an option for componentwise calculation.

- Parameters

other (Boxes) – boxes to compare IoUs against

bias (int) – either 0 or 1; determines whether a box whose top-left corner equals its bottom-right corner has an area of 0 or 1. Defaults to 0.

impl (str) – code specifying the implementation used to compute the IoUs. Can be ‘torch’, ‘py’, ‘c’, or ‘auto’. Efficiency and the exact result vary by implementation, but the results are always close. Some implementations only accept certain data types (e.g. impl=’c’ only accepts float32 numpy arrays). See ~/code/kwimage/dev/bench_bbox.py for benchmark details. On my system the torch impl was fastest (when the data was on the GPU). Defaults to ‘auto’.

mode (str) – deprecated, use impl

- Returns

the ious

- Return type

ndarray

- SeeAlso:

iooas - for a measure of coverage between boxes

Examples

>>> import kwimage
>>> self = kwimage.Boxes(np.array([[ 0, 0, 10, 10],
>>>                                [10, 0, 20, 10],
>>>                                [20, 0, 30, 10]]), 'ltrb')
>>> other = kwimage.Boxes(np.array([6, 2, 20, 10]), 'ltrb')
>>> overlaps = self.ious(other, bias=1).round(2)
>>> assert np.all(np.isclose(overlaps, [0.21, 0.63, 0.04])), repr(overlaps)

Examples

>>> import kwimage
>>> boxes1 = kwimage.Boxes(np.array([[ 0, 0, 10, 10],
>>>                                  [10, 0, 20, 10],
>>>                                  [20, 0, 30, 10]]), 'ltrb')
>>> other = kwimage.Boxes(np.array([[6, 2, 20, 10],
>>>                                 [100, 200, 300, 300]]), 'ltrb')
>>> overlaps = boxes1.ious(other)
>>> print('{}'.format(ub.repr2(overlaps, precision=2, nl=1)))
np.array([[0.18, 0.  ],
          [0.61, 0.  ],
          [0.  , 0.  ]]...)

Examples

>>> # xdoctest: +IGNORE_WHITESPACE
>>> Boxes(np.empty(0), 'xywh').ious(Boxes(np.empty(4), 'xywh')).shape
(0,)
>>> #Boxes(np.empty(4), 'xywh').ious(Boxes(np.empty(0), 'xywh')).shape
>>> Boxes(np.empty((0, 4)), 'xywh').ious(Boxes(np.empty((0, 4)), 'xywh')).shape
(0, 0)
>>> Boxes(np.empty((1, 4)), 'xywh').ious(Boxes(np.empty((0, 4)), 'xywh')).shape
(1, 0)
>>> Boxes(np.empty((0, 4)), 'xywh').ious(Boxes(np.empty((1, 4)), 'xywh')).shape
(0, 1)

Examples

>>> # xdoctest: +REQUIRES(module:torch)
>>> formats = BoxFormat.cannonical
>>> istensors = [False, True]
>>> results = {}
>>> for format in formats:
>>>     for tensor in istensors:
>>>         boxes1 = Boxes.random(5, scale=10.0, rng=0, format=format, tensor=tensor)
>>>         boxes2 = Boxes.random(7, scale=10.0, rng=1, format=format, tensor=tensor)
>>>         ious = boxes1.ious(boxes2)
>>>         results[(format, tensor)] = ious
>>> results = {k: v.numpy() if torch.is_tensor(v) else v for k, v in results.items()}
>>> results = {k: v.tolist() for k, v in results.items()}
>>> print(ub.repr2(results, sk=True, precision=3, nl=2))
>>> from functools import partial
>>> assert ub.allsame(results.values(), partial(np.allclose, atol=1e-07))
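For reference, the all-vs-all IoU computation can be sketched in pure Python for boxes in ltrb format. `pairwise_ious` is a hypothetical helper, and adding `bias` to every width and height is an assumption based on the parameter description above. With that assumption the sketch reproduces the rounded values of the first two examples (bias=1 and bias=0).

```python
def pairwise_ious(boxes1, boxes2, bias=0):
    """All-vs-all IoU between two lists of ltrb boxes (hypothetical helper).

    ``bias`` is added to widths and heights, so with bias=1 a box whose
    top-left equals its bottom-right has area 1 instead of 0.
    """
    def area(b):
        return (b[2] - b[0] + bias) * (b[3] - b[1] + bias)

    result = []
    for a in boxes1:
        row = []
        for b in boxes2:
            # Overlap extents, clamped at zero when the boxes are disjoint
            iw = max(0, min(a[2], b[2]) - max(a[0], b[0]) + bias)
            ih = max(0, min(a[3], b[3]) - max(a[1], b[1]) + bias)
            isect = iw * ih
            union = area(a) + area(b) - isect
            row.append(isect / union if union > 0 else 0.0)
        result.append(row)
    return result


boxes1 = [[0, 0, 10, 10], [10, 0, 20, 10], [20, 0, 30, 10]]
other = [[6, 2, 20, 10]]
print([round(r[0], 2) for r in pairwise_ious(boxes1, other, bias=1)])
# [0.21, 0.63, 0.04]
```

The result is an N x M nested list, matching the all-vs-all shape of the ndarray returned by `ious`.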

- iooas(other, bias=0)[source]¶

Intersection over other area.

This is an asymmetric measure of coverage: how much of each “other” box is covered by these boxes. It is the ratio of the area of intersection between each pair of boxes to the area of the “other” box.

- SeeAlso:

ious - for a measure of similarity between boxes

- Parameters

other (Boxes) – boxes to compare IoOA against

bias (int) – either 0 or 1; determines whether a box whose top-left corner equals its bottom-right corner has an area of 0 or 1. Defaults to 0.

- Returns

the iooas

- Return type

ndarray

Examples

>>> self = Boxes(np.array([[ 0, 0, 10, 10],
>>>                        [10, 0, 20, 10],
>>>                        [20, 0, 30, 10]]), 'ltrb')
>>> other = Boxes(np.array([[6, 2, 20, 10], [0, 0, 0, 3]]), 'xywh')
>>> coverage = self.iooas(other, bias=0).round(2)
>>> print('coverage = {!r}'.format(coverage))
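The asymmetry comes from dividing the intersection by the area of the “other” box only, rather than by the union. The pure-Python sketch below illustrates this on ltrb boxes; `pairwise_iooas` is a hypothetical helper, and the handling of `bias` mirrors the assumption made for IoU.

```python
def pairwise_iooas(boxes, others, bias=0):
    """Intersection over the *other* box's area for ltrb boxes (hypothetical helper)."""
    result = []
    for a in boxes:
        row = []
        for b in others:
            iw = max(0, min(a[2], b[2]) - max(a[0], b[0]) + bias)
            ih = max(0, min(a[3], b[3]) - max(a[1], b[1]) + bias)
            other_area = (b[2] - b[0] + bias) * (b[3] - b[1] + bias)
            row.append(iw * ih / other_area if other_area > 0 else 0.0)
        result.append(row)
    return result


big, small = [0, 0, 10, 10], [2, 2, 4, 4]
# The big box fully covers the small one...
print(pairwise_iooas([big], [small]))  # [[1.0]]
# ...but the small box covers only 4% of the big one
print(pairwise_iooas([small], [big]))  # [[0.04]]
```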

- isect_area(other, bias=0)[source]¶

Computes the intersection-area part of the intersection over union computation.

- Parameters

other (Boxes) – boxes to compute intersection areas against

bias (int) – either 0 or 1; determines whether a box whose top-left corner equals its bottom-right corner has an area of 0 or 1. Defaults to 0.

- Returns

the pairwise intersection areas

- Return type

ndarray

Examples

>>> # xdoctest: +IGNORE_WHITESPACE
>>> self = Boxes.random(5, scale=10.0, rng=0, format='ltrb')
>>> other = Boxes.random(3, scale=10.0, rng=1, format='ltrb')
>>> isect = self.isect_area(other, bias=0)
>>> ious_v1 = isect / ((self.area + other.area.T) - isect)
>>> ious_v2 = self.ious(other, bias=0)
>>> assert np.allclose(ious_v1, ious_v2)

- intersection(other)[source]¶

Componentwise intersection between two sets of Boxes

The intersection of two axis-aligned boxes is always a box, so this operation stays within the Boxes representation.

- Parameters

other (Boxes) – boxes to intersect with this object (must be the same length).

- Returns

the intersection geometry

- Return type

Boxes

Examples

>>> # xdoctest: +IGNORE_WHITESPACE
>>> from kwimage.structs.boxes import *  # NOQA
>>> self = Boxes.random(5, rng=0).scale(10.)
>>> other = self.translate(1)
>>> new = self.intersection(other)
>>> new_area = np.nan_to_num(new.area).ravel()
>>> alt_area = np.diag(self.isect_area(other))
>>> close = np.isclose(new_area, alt_area)
>>> assert np.all(close)

- union_hull(other)[source]¶

Componentwise hull union between two sets of Boxes

NOTE: this returns the bounding hull of each pair of boxes, not their true union; convert to polygons to compute a real union.

- Parameters

other (Boxes) – boxes to union with this object (must be the same length).

- Returns

unioned boxes

- Return type

Boxes

Examples

>>> # xdoctest: +IGNORE_WHITESPACE
>>> from kwimage.structs.boxes import *  # NOQA
>>> self = Boxes.random(5, rng=0).scale(10.)
>>> other = self.translate(1)
>>> new = self.union_hull(other)
>>> new_area = np.nan_to_num(new.area).ravel()
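Because intersection and union_hull both operate componentwise on axis-aligned boxes, each reduces to taking maxima and minima of corner coordinates. The hypothetical helpers below sketch this for a single pair of ltrb boxes; returning None for an empty intersection is a choice of this sketch, not necessarily how kwimage represents it. Note that the hull of (0, 0, 4, 4) and (1, 1, 5, 5) has area 25, while their true union has area 16 + 16 - 9 = 23, which is why polygons are needed for a real union.

```python
def intersection_ltrb(a, b):
    """Componentwise intersection of two ltrb boxes (hypothetical helper).

    Returns None when the boxes do not overlap.
    """
    l, t = max(a[0], b[0]), max(a[1], b[1])
    r, bot = min(a[2], b[2]), min(a[3], b[3])
    if r < l or bot < t:
        return None
    return (l, t, r, bot)


def union_hull_ltrb(a, b):
    """Smallest ltrb box containing both inputs (not a true set union)."""
    return (min(a[0], b[0]), min(a[1], b[1]), max(a[2], b[2]), max(a[3], b[3]))


a, b = (0, 0, 4, 4), (1, 1, 5, 5)   # b is a translated by 1
print(intersection_ltrb(a, b))      # (1, 1, 4, 4)
print(union_hull_ltrb(a, b))        # (0, 0, 5, 5)
```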

- bounding_box()[source]¶

Returns the box that bounds all of the contained boxes

- Returns

a single box

- Return type

Boxes

Examples

>>> # xdoctest: +IGNORE_WHITESPACE
>>> from kwimage.structs.boxes import *  # NOQA
>>> self = Boxes.random(5, rng=0).scale(10.)
>>> new = self.bounding_box()
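Conceptually, the bounding box takes the minimum of all left and top edges and the maximum of all right and bottom edges. A pure-Python sketch with a hypothetical `bounding_box_ltrb` helper:

```python
def bounding_box_ltrb(boxes):
    """Single ltrb box enclosing every box in ``boxes`` (hypothetical helper)."""
    lefts, tops, rights, bottoms = zip(*boxes)
    return (min(lefts), min(tops), max(rights), max(bottoms))


print(bounding_box_ltrb([(0, 0, 2, 2), (5, 1, 7, 9), (-1, 3, 1, 4)]))
# (-1, 0, 7, 9)
```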

- contains(other)[source]¶

Determine if points are completely contained by these boxes

- Parameters

other (kwimage.Points) – points to test for containment. TODO: support generic data types

- Returns

- flags – N x M boolean matrix indicating which box contains which points, where N is the number of boxes and M is the number of points.

- Return type

ArrayLike

Examples

>>> import kwimage
>>> self = kwimage.Boxes.random(10).scale(10).round()
>>> other = kwimage.Points.random(10).scale(10).round()
>>> flags = self.contains(other)
>>> flags = self.contains(self.xy_center)
>>> assert np.all(np.diag(flags))
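The N x M result described above can be sketched for ltrb boxes and (x, y) points as follows. `contains_points` is a hypothetical helper, and treating points on the boundary as contained is an assumption that may differ from kwimage's convention.

```python
def contains_points(boxes, points):
    """N x M containment matrix for ltrb boxes and (x, y) points.

    Hypothetical helper; boundary points count as contained here.
    """
    return [[l <= x <= r and t <= y <= b for (x, y) in points]
            for (l, t, r, b) in boxes]


boxes = [(0, 0, 10, 10), (20, 20, 30, 30)]
points = [(5, 5), (25, 25), (15, 15)]
print(contains_points(boxes, points))
# [[True, False, False], [False, True, False]]
```

As in the doctest above, each box always contains its own center, so the diagonal of `contains_points(boxes, centers)` is all True.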

- view(*shape)[source]¶

Passthrough method to view or reshape

- Parameters

*shape (Tuple[int, …]) – new shape

- Returns

data with a different view

- Return type

Boxes

Example

>>> # xdoctest: +REQUIRES(module:torch)
>>> self = Boxes.random(6, scale=10.0, rng=0, format='xywh').tensor()
>>> assert list(self.view(3, 2, 4).data.shape) == [3, 2, 4]
>>> self = Boxes.random(6, scale=10.0, rng=0, format='ltrb').tensor()
>>> assert list(self.view(3, 2, 4).data.shape) == [3, 2, 4]

- class kwimage.Color(color, alpha=None, space=None)[source]¶

Bases:

NiceRepr

Used for converting a single color between spaces and encodings. This should only be used when handling small numbers of colors (e.g. 1); don’t use this to represent an image.

- Parameters

space (str) – colorspace of wrapped color. Assume RGB if not specified and it cannot be inferred

CommandLine

xdoctest -m ~/code/kwimage/kwimage/im_color.py Color

Example

>>> print(Color('g'))
>>> print(Color('orangered'))
>>> print(Color('#AAAAAA').as255())
>>> print(Color([0, 255, 0]))
>>> print(Color([1, 1, 1.]))
>>> print(Color([1, 1, 1]))
>>> print(Color(Color([1, 1, 1])).as255())
>>> print(Color(Color([1., 0, 1, 0])).ashex())
>>> print(Color([1, 1, 1], alpha=255))
>>> print(Color([1, 1, 1], alpha=255, space='lab'))

- forimage(image, space='auto')[source]¶

Return a numeric value for this color that can be used in the given image.

Create a numeric color tuple that agrees with the format of the input image (i.e. float or int, with 3 or 4 channels).

- Parameters

image (ndarray) – image to return color for

space (str) – colorspace of the input image. Defaults to ‘auto’, which will choose rgb or rgba

- Returns

the color value

- Return type

Tuple[Number, …]

Example

>>> import kwimage
>>> img_f3 = np.zeros([8, 8, 3], dtype=np.float32)
>>> img_u3 = np.zeros([8, 8, 3], dtype=np.uint8)
>>> img_f4 = np.zeros([8, 8, 4], dtype=np.float32)
>>> img_u4 = np.zeros([8, 8, 4], dtype=np.uint8)
>>> kwimage.Color('red').forimage(img_f3)
(1.0, 0.0, 0.0)
>>> kwimage.Color('red').forimage(img_f4)
(1.0, 0.0, 0.0, 1.0)
>>> kwimage.Color('red').forimage(img_u3)
(255, 0, 0)
>>> kwimage.Color('red').forimage(img_u4)
(255, 0, 0, 255)
>>> kwimage.Color('red', alpha=0.5).forimage(img_f4)
(1.0, 0.0, 0.0, 0.5)
>>> kwimage.Color('red', alpha=0.5).forimage(img_u4)
(255, 0, 0, 127)
>>> kwimage.Color('red').forimage(np.uint8)
(255, 0, 0)
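The outputs above follow a simple rule: float images receive values in [0, 1], uint8 images receive integers in [0, 255], and four-channel images get an alpha channel appended (1.0 by default). The hypothetical `color_for_dtype` helper below sketches that rule; the truncation that maps alpha 0.5 to 127 is inferred from the example output, not taken from kwimage's source.

```python
def color_for_dtype(rgb01, alpha=None, is_float=True, num_channels=3):
    """Format an RGB color given in [0, 1] for a float or uint8 image.

    Hypothetical helper: floats stay in [0, 1]; uint8 values are scaled to
    [0, 255] and truncated with int(); a 4th channel receives the alpha.
    """
    channels = list(rgb01)
    if num_channels == 4:
        channels.append(1.0 if alpha is None else alpha)
    if is_float:
        return tuple(float(c) for c in channels)
    return tuple(int(c * 255) for c in channels)


red = (1.0, 0.0, 0.0)
print(color_for_dtype(red, is_float=False, num_channels=4))             # (255, 0, 0, 255)
print(color_for_dtype(red, alpha=0.5, is_float=False, num_channels=4))  # (255, 0, 0, 127)
```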

- ashex(space=None)[source]¶

Convert to hex values

- Parameters

space (None | str) – if specified convert to this colorspace before returning

- Returns

the hex representation

- Return type

str

- classmethod named_colors()[source]¶

- Returns

names of colors that Color accepts

- Return type

List[str]

Example

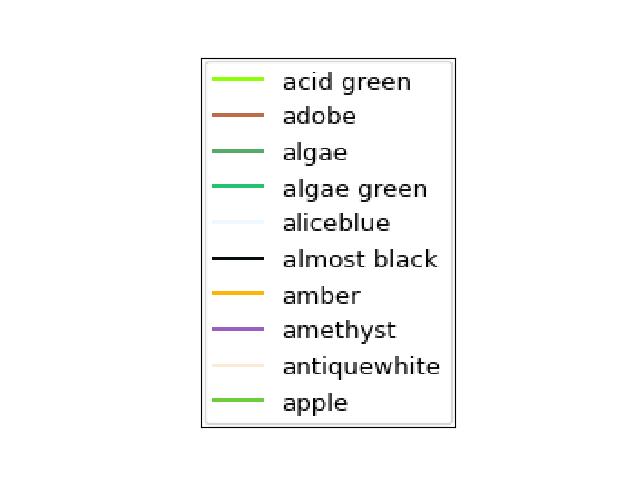

>>> import kwimage
>>> named_colors = kwimage.Color.named_colors()
>>> color_lut = {name: kwimage.Color(name).as01() for name in named_colors}
>>> # xdoctest: +REQUIRES(module:kwplot)
>>> # xdoctest: +REQUIRES(--show)
>>> import kwplot
>>> kwplot.autompl()
>>> # This is a very big table if we let it be, reduce it
>>> color_lut = dict(list(color_lut.items())[0:10])
>>> canvas = kwplot.make_legend_img(color_lut)
>>> kwplot.imshow(canvas)

- classmethod distinct(num, existing=None, space='rgb', legacy='auto', exclude_black=True, exclude_white=True)[source]¶

Make multiple distinct colors.

The legacy variant is based on a stack overflow post [HowToDistinct], but the modern variant is based on the distinctipy package.

References

- HowToDistinct

https://stackoverflow.com/questions/470690/how-to-automatically-generate-n-distinct-colors

- Returns

list of distinct float color values

- Return type

List[Tuple]

Example

>>> # xdoctest: +REQUIRES(module:matplotlib)
>>> from kwimage.im_color import *  # NOQA
>>> import kwimage
>>> colors1 = kwimage.Color.distinct(5, legacy=False)
>>> colors2 = kwimage.Color.distinct(3, existing=colors1)
>>> # xdoctest: +REQUIRES(module:kwplot)
>>> # xdoctest: +REQUIRES(--show)
>>> from kwimage.im_color import _draw_color_swatch
>>> swatch1 = _draw_color_swatch(colors1, cellshape=9)
>>> swatch2 = _draw_color_swatch(colors1 + colors2, cellshape=9)
>>> import kwplot
>>> kwplot.autompl()
>>> kwplot.imshow(swatch1, pnum=(1, 2, 1), fnum=1)
>>> kwplot.imshow(swatch2, pnum=(1, 2, 2), fnum=1)
>>> kwplot.show_if_requested()
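A simple heuristic for generating a handful of distinguishable colors is to space hues evenly around the HSV color wheel. The sketch below uses only the standard library `colorsys` module; `evenly_spaced_hues` is a hypothetical helper and is neither kwimage's legacy algorithm nor the distinctipy-based one, both of which may choose colors differently (for example, by avoiding the `existing` colors).

```python
import colorsys


def evenly_spaced_hues(num, saturation=1.0, value=1.0):
    """Return ``num`` RGB float triples with hues spaced evenly on the HSV wheel.

    Hypothetical helper illustrating hue spacing only.
    """
    return [colorsys.hsv_to_rgb(i / num, saturation, value) for i in range(num)]


for rgb in evenly_spaced_hues(3):
    print(tuple(round(c, 3) for c in rgb))
# (1.0, 0.0, 0.0)
# (0.0, 1.0, 0.0)
# (0.0, 0.0, 1.0)
```

Hue spacing degrades as `num` grows because neighboring hues become hard to tell apart, which is one motivation for dedicated packages such as distinctipy.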

- class kwimage.Coords(data=None, meta=None)[source]¶


A data structure to store n-dimensional coordinate geometry.

Currently it is up to the user to maintain what coordinate system this geometry belongs to.

Note

This class was designed to hold coordinates in r/c format, but in general this class is agnostic to dimension ordering as long as you are consistent. However, there are two places where this matters: (1) drawing and (2) gdal/imgaug-warping. In these places we assume x/y for legacy reasons. This may change in the future.

The term axes with respect to Coords always refers to the final numpy axis. In other words, the final numpy axis represents ALL of the coordinate axes.

CommandLine

xdoctest -m kwimage.structs.coords Coords

Example

>>> from kwimage.structs.coords import *  # NOQA
>>> import kwarray
>>> rng = kwarray.ensure_rng(0)
>>> self = Coords.random(num=4, dim=3, rng=rng)
>>> print('self = {}'.format(self))
self = <Coords(data=
    array([[0.5488135 , 0.71518937, 0.60276338],
           [0.54488318, 0.4236548 , 0.64589411],
           [0.43758721, 0.891773  , 0.96366276],
           [0.38344152, 0.79172504, 0.52889492]]))>
>>> matrix = rng.rand(4, 4)
>>> self.warp(matrix)
<Coords(data=
    array([[0.71037426, 1.25229659, 1.39498435],
           [0.60799503, 1.26483447, 1.42073131],
           [0.72106004, 1.39057144, 1.38757508],
           [0.68384299, 1.23914654, 1.29258196]]))>
>>> self.translate(3, inplace=True)
<Coords(data=
    array([[3.5488135 , 3.71518937, 3.60276338],
           [3.54488318, 3.4236548 , 3.64589411],
           [3.43758721, 3.891773  , 3.96366276],
           [3.38344152, 3.79172504, 3.52889492]]))>
>>> self.translate(3, inplace=True)
<Coords(data=
    array([[6.5488135 , 6.71518937, 6.60276338],
           [6.54488318, 6.4236548 , 6.64589411],
           [6.43758721, 6.891773  , 6.96366276],
           [6.38344152, 6.79172504, 6.52889492]]))>
>>> self.scale(2)
<Coords(data=
    array([[13.09762701, 13.43037873, 13.20552675],
           [13.08976637, 12.8473096 , 13.29178823],
           [12.87517442, 13.783546  , 13.92732552],
           [12.76688304, 13.58345008, 13.05778984]]))>
>>> # xdoctest: +REQUIRES(module:torch)
>>> self.tensor()
>>> self.tensor().tensor().numpy().numpy()
>>> self.numpy()
>>> #self.draw_on()

- property dtype¶

- property dim¶

- property shape¶

- classmethod random(num=1, dim=2, rng=None, meta=None)[source]¶

Makes random coordinates; typically for testing purposes

- compress(flags, axis=0, inplace=False)[source]¶

Filters items based on a boolean criterion

- Parameters

flags (ArrayLike) – true for items to be kept. Extended type: ArrayLike[bool].

axis (int) – you usually want this to be 0

inplace (bool) – if True, modifies this object

- Returns

filtered coords

- Return type

Coords

Example

>>> import kwimage
>>> self = kwimage.Coords.random(10, rng=0)
>>> self.compress([True] * len(self))
>>> self.compress([False] * len(self))
<Coords(data=array([], shape=(0, 2), dtype=float64))>
>>> # xdoctest: +REQUIRES(module:torch)
>>> self = self.tensor()
>>> self.compress([True] * len(self))
>>> self.compress([False] * len(self))

- take(indices, axis=0, inplace=False)[source]¶

Takes a subset of items at specific indices

- Parameters

indices (ArrayLike) – indexes of items to take. Extended type ArrayLike[int].

axis (int) – you usually want this to be 0

inplace (bool) – if True, modifies this object

- Returns

filtered coords

- Return type

Coords

Example

>>> import kwimage
>>> self = kwimage.Coords(np.array([[25, 30, 15, 10]]))
>>> self.take([0])
<Coords(data=array([[25, 30, 15, 10]]))>
>>> self.take([])
<Coords(data=array([], shape=(0, 4), dtype=int64))>

- astype(dtype, inplace=False)[source]¶

Changes the data type

- Parameters

dtype – new type

inplace (bool) – if True, modifies this object

- Returns

modified coordinates

- Return type

Coords

- round(decimals=0, inplace=False)[source]¶

Rounds data to the specified decimal place

- Parameters

inplace (bool) – if True, modifies this object

decimals (int) – number of decimal places to round to

- Returns

modified coordinates

- Return type

Coords

Example

>>> import kwimage
>>> self = kwimage.Coords.random(3).scale(10)
>>> self.round()

- view(*shape)[source]¶

Passthrough method to view or reshape

- Parameters

*shape – new shape of the data

- Returns

modified coordinates

- Return type

Coords

Example

>>> self = Coords.random(6, dim=4).numpy()
>>> assert list(self.view(3, 2, 4).data.shape) == [3, 2, 4]
>>> # xdoctest: +REQUIRES(module:torch)
>>> self = Coords.random(6, dim=4).tensor()
>>> assert list(self.view(3, 2, 4).data.shape) == [3, 2, 4]

- classmethod concatenate(coords, axis=0)[source]¶

Concatenates lists of coordinates together

- Parameters

coords (Sequence[Coords]) – list of coords to concatenate

axis (int) – axis to stack on. Defaults to 0.

- Returns

stacked coords

- Return type

Coords

CommandLine

xdoctest -m kwimage.structs.coords Coords.concatenate

Example

>>> coords = [Coords.random(3) for _ in range(3)]
>>> new = Coords.concatenate(coords)
>>> assert len(new) == 9
>>> assert np.all(new.data[3:6] == coords[1].data)

- property device¶

If the backend is torch returns the data device, otherwise None

- tensor(device=NoParam)[source]¶

Converts numpy to tensors. Does not change memory if possible.

- Returns

modified coordinates

- Return type

Coords

Example

>>> # xdoctest: +REQUIRES(module:torch)
>>> self = Coords.random(3).numpy()
>>> newself = self.tensor()
>>> self.data[0, 0] = 0
>>> assert newself.data[0, 0] == 0
>>> self.data[0, 0] = 1
>>> assert self.data[0, 0] == 1

- numpy()[source]¶

Converts tensors to numpy. Does not change memory if possible.

- Returns

modified coordinates

- Return type

Coords

Example

>>> # xdoctest: +REQUIRES(module:torch)
>>> self = Coords.random(3).tensor()
>>> newself = self.numpy()
>>> self.data[0, 0] = 0
>>> assert newself.data[0, 0] == 0
>>> self.data[0, 0] = 1
>>> assert self.data[0, 0] == 1

- reorder_axes(new_order, inplace=False)[source]¶

Change the ordering of the coordinate axes.

- Parameters

new_order (Tuple[int]) – new_order[i] specifies which axis in the original coordinates should be mapped to the i-th position in the returned axes.

inplace (bool) – if True, modifies data inplace

- Returns

modified coordinates

- Return type

Coords

Note

This is the ordering of the “columns” in the final numpy axis, not the ordering of the numpy axes themselves.

Example

>>> from kwimage.structs.coords import *  # NOQA
>>> self = Coords(data=np.array([
>>>     [7, 11],
>>>     [13, 17],
>>>     [21, 23],
>>> ]))
>>> new = self.reorder_axes((1, 0))
>>> print('new = {!r}'.format(new))
new = <Coords(data=
    array([[11,  7],
           [17, 13],
           [23, 21]]))>

Example

>>> from kwimage.structs.coords import *  # NOQA
>>> self = Coords.random(10, rng=0)
>>> new = self.reorder_axes((1, 0))
>>> # Remapping using 1, 0 reverses the axes
>>> assert np.all(new.data[:, 0] == self.data[:, 1])
>>> assert np.all(new.data[:, 1] == self.data[:, 0])
>>> # Remapping using 0, 1 does nothing
>>> eye = self.reorder_axes((0, 1))
>>> assert np.all(eye.data == self.data)
>>> # Remapping using 0, 0, destroys the 1-th column
>>> bad = self.reorder_axes((0, 0))
>>> assert np.all(bad.data[:, 0] == self.data[:, 0])
>>> assert np.all(bad.data[:, 1] == self.data[:, 0])
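Per the parameter description, column i of the result is column new_order[i] of the input; in NumPy terms that is the same as indexing the final axis with data[..., new_order]. The hypothetical pure-Python helper below sketches the mapping on nested lists and reproduces the behavior shown in the examples above.

```python
def reorder_columns(rows, new_order):
    """Return rows where output column i is input column new_order[i].

    Hypothetical pure-Python analogue of ``Coords.reorder_axes``.
    """
    return [[row[j] for j in new_order] for row in rows]


data = [[7, 11], [13, 17], [21, 23]]
print(reorder_columns(data, (1, 0)))  # [[11, 7], [17, 13], [23, 21]]
print(reorder_columns(data, (0, 0)))  # [[7, 7], [13, 13], [21, 21]]
```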

- warp(transform, input_dims=None, output_dims=None, inplace=False)[source]¶

Generalized coordinate transform.

- Parameters

transform (GeometricTransform | ArrayLike | Augmenter | Callable) – a scikit-image transform, a 3x3 transformation matrix, an imgaug Augmenter, or a generic callable which transforms an NxD ndarray.

input_dims (Tuple) – shape of the image these objects correspond to (only needed / used when transform is an imgaug augmenter)

output_dims (Tuple) – unused in non-raster structures, only exists for compatibility.

inplace (bool) – if True, modifies data inplace

- Returns

modified coordinates

- Return type

Coords

Note

Let D = self.dim (the number of coordinate dimensions)

- transformation matrices can be either:

(D + 1) x (D + 1) # for homog

D x D # for scale / rotate

D x (D + 1) # for affine
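The three matrix shapes listed above can be illustrated for D = 2 with a small pure-Python helper. `apply_matrix` is hypothetical: it appends a homogeneous 1 when the matrix has D + 1 columns and divides by the last output coordinate when it has D + 1 rows, which is the standard way these shapes are applied; kwimage's own dispatch may differ in its details.

```python
def apply_matrix(points, matrix):
    """Apply a DxD, Dx(D+1), or (D+1)x(D+1) matrix to D-dimensional points.

    Hypothetical helper; not kwimage's implementation.
    """
    dim = len(points[0])
    rows, cols = len(matrix), len(matrix[0])
    out = []
    for p in points:
        # Append a homogeneous 1 when the matrix has an extra column
        vec = list(p) + [1.0] if cols == dim + 1 else list(p)
        res = [sum(m * v for m, v in zip(row, vec)) for row in matrix]
        if rows == dim + 1:
            # Homogeneous (projective) case: divide by the last coordinate
            w = res[-1]
            res = [c / w for c in res[:-1]]
        out.append(res)
    return out


pts = [[1.0, 2.0]]
print(apply_matrix(pts, [[2, 0], [0, 2]]))                    # D x D: [[2.0, 4.0]]
print(apply_matrix(pts, [[1, 0, 10], [0, 1, 20]]))            # D x (D + 1): [[11.0, 22.0]]
print(apply_matrix(pts, [[2, 0, 0], [0, 2, 0], [0, 0, 1]]))  # (D + 1) x (D + 1): [[2.0, 4.0]]
```

Both identity shapes leave points unchanged, which matches the np.eye(3) and np.eye(2) doctest below.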

Example

>>> from kwimage.structs.coords import *  # NOQA
>>> import skimage.transform
>>> self = Coords.random(10, rng=0)
>>> transform = skimage.transform.AffineTransform(scale=(2, 2))
>>> new = self.warp(transform)
>>> assert np.all(new.data == self.scale(2).data)

Doctest

>>> self = Coords.random(10, rng=0)
>>> assert np.all(self.warp(np.eye(3)).data == self.data)
>>> assert np.all(self.warp(np.eye(2)).data == self.data)

Doctest

>>> # xdoctest: +REQUIRES(module:osgeo)
>>> from osgeo import osr
>>> wgs84_crs = osr.SpatialReference()
>>> wgs84_crs.ImportFromEPSG(4326)
>>> dst_crs = osr.SpatialReference()
>>> dst_crs.ImportFromEPSG(2927)
>>> transform = osr.CoordinateTransformation(wgs84_crs, dst_crs)
>>> self = Coords.random(10, rng=0)
>>> new = self.warp(transform)
>>> assert np.all(new.data != self.data)

>>> # Alternative using generic func
>>> def _gdal_coord_tranform(pts):
...     return np.array([transform.TransformPoint(x, y, 0)[0:2]
...                      for x, y in pts])
>>> alt = self.warp(_gdal_coord_tranform)
>>> assert np.all(alt.data != self.data)
>>> assert np.all(alt.data == new.data)

Doctest

>>> # can use a generic function
>>> def func(xy):
...     return np.zeros_like(xy)
>>> self = Coords.random(10, rng=0)
>>> assert np.all(self.warp(func).data == 0)

- to_imgaug(input_dims)[source]¶

Translate to an imgaug object

- Returns

imgaug data structure

- Return type

imgaug.KeypointsOnImage

Example

>>> # xdoctest: +REQUIRES(module:imgaug)
>>> import kwimage
>>> import numpy as np
>>> self = kwimage.Coords.random(10)
>>> input_dims = (10, 10)
>>> kpoi = self.to_imgaug(input_dims)
>>> new = kwimage.Coords.from_imgaug(kpoi)
>>> assert np.allclose(new.data, self.data)

- scale(factor, about=None, output_dims=None, inplace=False)[source]¶

Scale coordinates by a factor

- Parameters

factor (float | Tuple[float, float]) – scale factor as either a scalar or per-dimension tuple.

about (Tuple | None) – if unspecified, scales about the origin (0, 0); otherwise the scaling is about this point.

output_dims (Tuple) – unused in non-raster spatial structures

inplace (bool) – if True, modifies data inplace

- Returns

modified coordinates

- Return type

Coords

Example

>>> from kwimage.structs.coords import *  # NOQA
>>> self = Coords.random(10, rng=0)
>>> new = self.scale(10)
>>> assert new.data.max() <= 10

>>> self = Coords.random(10, rng=0)
>>> self.data = (self.data * 10).astype(int)
>>> new = self.scale(10)
>>> assert new.data.dtype.kind == 'i'
>>> new = self.scale(10.0)
>>> assert new.data.dtype.kind == 'f'
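Scaling about a point p keeps p fixed and scales every offset from it: x' = p + s (x - p). A pure-Python sketch with a hypothetical `scale_about` helper for 2D points, accepting either a scalar or a per-dimension factor as described in the parameters above:

```python
def scale_about(points, factor, about=(0.0, 0.0)):
    """Scale 2D points by ``factor`` about the point ``about`` (hypothetical helper)."""
    ax, ay = about
    # A scalar factor applies to both dimensions; a tuple gives (sx, sy)
    sx, sy = (factor, factor) if isinstance(factor, (int, float)) else factor
    return [(ax + sx * (x - ax), ay + sy * (y - ay)) for x, y in points]


print(scale_about([(1.0, 1.0), (3.0, 1.0)], 2, about=(2.0, 1.0)))
# [(0.0, 1.0), (4.0, 1.0)]
```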

- translate(offset, output_dims=None, inplace=False)[source]¶

Shift the coordinates

- Parameters

offset (float | Tuple[float, float]) – translation offset as either a scalar or a per-dimension tuple.

output_dims (Tuple) – unused in non-raster spatial structures

inplace (bool) – if True, modifies data inplace

- Returns

modified coordinates

- Return type

Coords

Example

>>> from kwimage.structs.coords import *  # NOQA
>>> self = Coords.random(10, dim=3, rng=0)
>>> new = self.translate(10)
>>> assert new.data.min() >= 10
>>> assert new.data.max() <= 11
>>> Coords.random(3, dim=3, rng=0)
>>> Coords.random(3, dim=3, rng=0).translate((1, 2, 3))

- rotate(theta, about=None, output_dims=None, inplace=False)[source]¶

Rotate the coordinates about a point.

- Parameters

theta (float) – rotation angle in radians

about (Tuple | None) – if unspecified rotates about the origin (0, 0), otherwise the rotation is about this point.

output_dims (Tuple) – unused in non-raster spatial structures

inplace (bool) – if True, modifies data inplace

- Returns

modified coordinates

- Return type

Coords

Todo

[ ] Generalized ND Rotations?

References

https://math.stackexchange.com/questions/197772/gen-rot-matrix

Example

>>> from kwimage.structs.coords import *  # NOQA
>>> self = Coords.random(10, dim=2, rng=0)
>>> theta = np.pi / 2
>>> new = self.rotate(theta)

>>> # Test rotate agrees with warp
>>> sin_ = np.sin(theta)
>>> cos_ = np.cos(theta)
>>> rot_ = np.array([[cos_, -sin_], [sin_, cos_]])
>>> new2 = self.warp(rot_)
>>> assert np.allclose(new.data, new2.data)

>>> #
>>> # Rotate about a custom point
>>> theta = np.pi / 2
>>> new3 = self.rotate(theta, about=(0.5, 0.5))
>>> #
>>> # Rotate about the center of mass
>>> about = self.data.mean(axis=0)
>>> new4 = self.rotate(theta, about=about)
>>> # xdoc: +REQUIRES(--show)
>>> # xdoc: +REQUIRES(module:kwplot)
>>> import kwplot
>>> kwplot.figure(fnum=1, doclf=True)
>>> plt = kwplot.autoplt()
>>> self.draw(radius=0.01, color='blue', alpha=.5, coord_axes=[1, 0], setlim='grow')
>>> plt.gca().set_aspect('equal')
>>> new3.draw(radius=0.01, color='red', alpha=.5, coord_axes=[1, 0], setlim='grow')
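Rotating about a point follows the same pattern as scaling about one: translate so the pivot sits at the origin, apply the 2x2 rotation matrix used in the warp comparison above, then translate back. The hypothetical `rotate_about` helper sketches this for (x, y) points. A positive theta is counterclockwise in a y-up frame, which appears clockwise when y points down, as in image coordinates.

```python
import math


def rotate_about(points, theta, about=(0.0, 0.0)):
    """Rotate 2D (x, y) points by ``theta`` radians about ``about``.

    Hypothetical helper using the matrix [[cos, -sin], [sin, cos]].
    """
    ax, ay = about
    c, s = math.cos(theta), math.sin(theta)
    out = []
    for x, y in points:
        dx, dy = x - ax, y - ay  # move the pivot to the origin
        out.append((ax + c * dx - s * dy, ay + s * dx + c * dy))
    return out


new = rotate_about([(1.0, 0.5)], math.pi / 2, about=(0.5, 0.5))
print([tuple(round(v, 6) for v in p) for p in new])  # [(0.5, 1.0)]
```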

- fill(image, value, coord_axes=None, interp='bilinear')[source]¶

Sets sub-coordinate locations in a grid to a particular value

- Parameters

coord_axes (Tuple) – specify which image axes each coordinate dim corresponds to. For 2D images, if you are storing r/c data, set to [0,1], if you are storing x/y data, set to [1,0].

- Returns

image with coordinates rasterized on it

- Return type

ndarray
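The coord_axes mapping can be sketched with a hypothetical nearest-neighbor helper: coordinate column i is written to image axis coord_axes[i], so [1, 0] treats the data as x/y and [0, 1] treats it as r/c. The sketch rounds to the nearest cell and skips out-of-bounds points; kwimage's default interp='bilinear' presumably distributes the value over neighboring cells instead, so treat this only as an illustration of the axis mapping.

```python
def fill_nearest(image, coords, value, coord_axes=(1, 0)):
    """Set the nearest grid cell of each coordinate to ``value``.

    ``image`` is a list of rows (row-major); ``coord_axes[i]`` names the image
    axis that coordinate column ``i`` indexes. Out-of-bounds points are skipped.
    Hypothetical nearest-neighbor helper, not kwimage's implementation.
    """
    shape = (len(image), len(image[0]))
    for pt in coords:
        index = [0, 0]
        for i, axis in enumerate(coord_axes):
            index[axis] = int(round(pt[i]))
        r, c = index
        if 0 <= r < shape[0] and 0 <= c < shape[1]:
            image[r][c] = value
    return image


img = [[0] * 4 for _ in range(3)]
fill_nearest(img, [(3, 1)], 9, coord_axes=(1, 0))  # x=3, y=1
print(img)  # [[0, 0, 0, 0], [0, 0, 0, 9], [0, 0, 0, 0]]
```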

- soft_fill(image, coord_axes=None, radius=5)[source]¶

Used for drawing keypoint truth in heatmaps

- Parameters

coord_axes (Tuple) – specify which image axes each coordinate dim corresponds to. For 2D images, if you are storing r/c data, set to [0,1], if you are storing x/y data, set to [1,0].

In other words the i-th entry in coord_axes specifies which row-major spatial dimension the i-th column of a coordinate corresponds to. The index is the coordinate dimension and the value is the axes dimension.

- Returns

image with coordinates rasterized on it

- Return type

ndarray

References

https://stackoverflow.com/questions/54726703/generating-keypoint-heatmaps-in-tensorflow

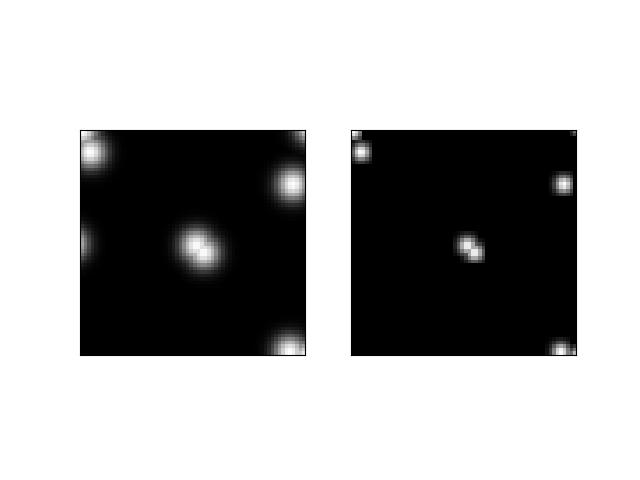

Example

>>> from kwimage.structs.coords import *  # NOQA
>>> s = 64
>>> self = Coords.random(10, meta={'shape': (s, s)}).scale(s)
>>> # Put points on edges to verify "edge cases"
>>> self.data[1] = [0, 0]  # top left
>>> self.data[2] = [s, s]  # bottom right
>>> self.data[3] = [0, s + 10]  # bottom left
>>> self.data[4] = [-3, s // 2]  # middle left
>>> self.data[5] = [s + 1, -1]  # top right
>>> # Put points in the middle to verify overlap blending
>>> self.data[6] = [32.5, 32.5]  # middle
>>> self.data[7] = [34.5, 34.5]  # middle
>>> fill_value = 1
>>> coord_axes = [1, 0]
>>> radius = 10
>>> image1 = np.zeros((s, s))
>>> self.soft_fill(image1, coord_axes=coord_axes, radius=radius)
>>> radius = 3.0
>>> image2 = np.zeros((s, s))
>>> self.soft_fill(image2, coord_axes=coord_axes, radius=radius)
>>> # xdoc: +REQUIRES(--show)
>>> # xdoc: +REQUIRES(module:kwplot)
>>> import kwplot
>>> kwplot.autompl()
>>> kwplot.imshow(image1, pnum=(1, 2, 1))
>>> kwplot.imshow(image2, pnum=(1, 2, 2))
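A keypoint heatmap is commonly rendered by placing a Gaussian bump at each point, as in the referenced Stack Overflow discussion. The hypothetical helper below sketches that idea in pure Python. Treating radius as the Gaussian standard deviation and combining overlapping bumps with max are assumptions of this sketch, not a description of kwimage's kernel or blending.

```python
import math


def gaussian_heatmap(shape, points_xy, radius):
    """Render a heatmap with a Gaussian bump of std ``radius`` at each (x, y).

    Overlapping bumps are combined with ``max`` so peaks stay at 1.0.
    Hypothetical helper.
    """
    h, w = shape
    heat = [[0.0] * w for _ in range(h)]
    for px, py in points_xy:
        for r in range(h):
            for c in range(w):
                d2 = (c - px) ** 2 + (r - py) ** 2
                heat[r][c] = max(heat[r][c], math.exp(-d2 / (2 * radius ** 2)))
    return heat


heat = gaussian_heatmap((8, 8), [(2, 3)], radius=1.5)
print(heat[3][2])  # 1.0 at the keypoint itself (row y=3, column x=2)
```

Points outside the grid, like the edge cases in the doctest above, still contribute their tails to nearby in-bounds cells in this sketch.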

- draw_on(image=None, fill_value=1, coord_axes=[1, 0], interp='bilinear')[source]¶

Note

unlike other methods, the defaults assume x/y internal data

- Parameters

coord_axes (Tuple) – specify which image axes each coordinate dim corresponds to. For 2D images, if you are storing r/c data, set to [0,1], if you are storing x/y data, set to [1,0].

In other words the i-th entry in coord_axes specifies which row-major spatial dimension the i-th column of a coordinate corresponds to. The index is the coordinate dimension and the value is the axes dimension.

- Returns

image with coordinates drawn on it

- Return type

ndarray

Example

>>> # xdoc: +REQUIRES(module:kwplot)
>>> from kwimage.structs.coords import *  # NOQA
>>> s = 256
>>> self = Coords.random(10, meta={'shape': (s, s)}).scale(s)
>>> self.data[0] = [10, 10]
>>> self.data[1] = [20, 40]
>>> image = np.zeros((s, s))
>>> fill_value = 1
>>> image = self.draw_on(image, fill_value, coord_axes=[1, 0], interp='bilinear')
>>> # image = self.draw_on(image, fill_value, coord_axes=[0, 1], interp='nearest')
>>> # image = self.draw_on(image, fill_value, coord_axes=[1, 0], interp='bilinear')
>>> # image = self.draw_on(image, fill_value, coord_axes=[1, 0], interp='nearest')
>>> # xdoc: +REQUIRES(--show)
>>> # xdoc: +REQUIRES(module:kwplot)
>>> import kwplot
>>> kwplot.autompl()
>>> kwplot.figure(fnum=1, doclf=True)
>>> kwplot.imshow(image)
>>> self.draw(radius=3, alpha=.5, coord_axes=[1, 0])

- draw(color='blue', ax=None, alpha=None, coord_axes=[1, 0], radius=1, setlim=False)[source]¶

Draw these coordinates via matplotlib

Note

unlike other methods, the defaults assume x/y internal data

- Parameters

setlim (bool) – if True, ensures the limits of the axes contain the coordinates

coord_axes (Tuple) – specify which image axes each coordinate dim corresponds to. For 2D images, if you are storing r/c data, set to [0,1], if you are storing x/y data, set to [1,0].

- Returns

drawn matplotlib objects

- Return type

List[mpl.collections.PatchCollection]

Example

>>> # xdoc: +REQUIRES(module:kwplot)
>>> from kwimage.structs.coords import *  # NOQA
>>> self = Coords.random(10)
>>> # xdoc: +REQUIRES(--show)
>>> import kwplot
>>> plt = kwplot.autoplt()
>>> self.draw(radius=0.05, alpha=0.8)
>>> plt.gca().set_xlim(0, 1)
>>> plt.gca().set_ylim(0, 1)
>>> plt.gca().set_aspect('equal')

- class kwimage.Detections(data=None, meta=None, datakeys=None, metakeys=None, checks=True, **kwargs)[source]¶

Bases:

NiceRepr, _DetAlgoMixin, _DetDrawMixin

Container for holding and manipulating multiple detections.

- Variables

data (Dict) –

dictionary containing corresponding lists. The length of each list is the number of detections. This contains the bounding boxes, confidence scores, and class indices. Details of the most common keys and types are as follows:

boxes (kwimage.Boxes[ArrayLike]): multiple bounding boxes

scores (ArrayLike): associated scores

class_idxs (ArrayLike): associated class indices

segmentations (ArrayLike): segmentation masks for each box; members can be Mask or MultiPolygon.

keypoints (ArrayLike): keypoints for each box. Members should be Points.

Additional custom keys may be specified as long as (a) the values are array-like and their first axis corresponds to the standard data values and (b) the custom keys are listed in the datakeys kwarg when constructing the Detections.

meta (Dict) – This contains contextual information about the detections. This includes the class names, which can be indexed into via the class indexes.

Example

>>> import kwimage
>>> dets = kwimage.Detections(
>>>     # there are expected keys that do not need registration
>>>     boxes=kwimage.Boxes.random(3),
>>>     class_idxs=[0, 1, 1],
>>>     classes=['a', 'b'],
>>>     # custom data attrs must align with boxes
>>>     myattr1=np.random.rand(3),
>>>     myattr2=np.random.rand(3, 2, 8),
>>>     # there are no restrictions on metadata
>>>     mymeta='a custom metadata string',
>>>     # Note that any key not in kwimage.Detections.__datakeys__ or
>>>     # kwimage.Detections.__metakeys__ must be registered at the
>>>     # time of construction.
>>>     datakeys=['myattr1', 'myattr2'],
>>>     metakeys=['mymeta'],
>>>     checks=True,
>>> )
>>> print('dets = {}'.format(dets))
dets = <Detections(3)>
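The alignment rule for data can be made concrete: every per-detection field must have one entry per detection along its first axis, while meta has no such restriction. The hypothetical `check_aligned` helper below sketches that rule with plain Python lists; it is not the validation kwimage performs when checks=True.

```python
def check_aligned(data, datakeys):
    """Verify every per-detection field has the same first-axis length.

    ``data`` maps keys (e.g. 'boxes', 'class_idxs', custom datakeys) to
    sequences. Hypothetical helper; returns the number of detections.
    """
    lengths = {key: len(data[key]) for key in datakeys if data.get(key) is not None}
    if len(set(lengths.values())) > 1:
        raise ValueError(f'misaligned detection fields: {lengths}')
    return next(iter(lengths.values()), 0)


data = {
    'boxes': [[0, 0, 5, 5], [1, 1, 4, 4], [2, 2, 3, 3]],
    'class_idxs': [0, 1, 1],
    'myattr1': [0.1, 0.2, 0.3],
}
print(check_aligned(data, ['boxes', 'class_idxs', 'myattr1']))  # 3
```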

- classmethod coerce(data=None, **kwargs)[source]¶

The “try-anything to get what I want” constructor

- Parameters

data

**kwargs – currently boxes and cnames

Example

>>> from kwimage.structs.detections import *  # NOQA
>>> import kwimage
>>> kwargs = dict(
>>>     boxes=kwimage.Boxes.random(4),
>>>     cnames=['a', 'b', 'c', 'c'],
>>> )
>>> data = {}
>>> self = kwimage.Detections.coerce(data, **kwargs)

- classmethod from_coco_annots(anns, cats=None, classes=None, kp_classes=None, shape=None, dset=None)[source]¶

Create a Detections object from a list of coco-like annotations.

- Parameters

anns (List[Dict]) – list of coco-like annotation objects

dset (kwcoco.CocoDataset) – if specified, cats, classes, and kp_classes are ignored.

cats (List[Dict]) – coco-format category information. Used only if dset is not specified.

classes (kwcoco.CategoryTree) – category tree with coco class info. Used only if dset is not specified.

kp_classes (kwcoco.CategoryTree) – keypoint category tree with coco keypoint class info. Used only if dset is not specified.

shape (tuple) – shape of parent image

- Returns

a detections object

- Return type

Detections

Example

>>> from kwimage.structs.detections import *  # NOQA
>>> # xdoctest: +REQUIRES(--module:ndsampler)
>>> anns = [{
>>>     'id': 0,
>>>     'image_id': 1,
>>>     'category_id': 2,
>>>     'bbox': [2, 3, 10, 10],
>>>     'keypoints': [4.5, 4.5, 2],
>>>     'segmentation': {
>>>         'counts': '_11a04M2O0O20N101N3L_5',
>>>         'size': [20, 20],
>>>     },
>>> }]
>>> dataset = {
>>>     'images': [],
>>>     'annotations': [],
>>>     'categories': [
>>>         {'id': 0, 'name': 'background'},
>>>         {'id': 2, 'name': 'class1', 'keypoints': ['spot']}
>>>     ]
>>> }
>>> #import ndsampler
>>> #dset = ndsampler.CocoDataset(dataset)
>>> cats = dataset['categories']
>>> dets = Detections.from_coco_annots(anns, cats)

Example

>>> # xdoctest: +REQUIRES(--module:ndsampler)
>>> # Test case with no category information
>>> from kwimage.structs.detections import *  # NOQA
>>> anns = [{
>>>     'id': 0,
>>>     'image_id': 1,
>>>     'category_id': None,
>>>     'bbox': [2, 3, 10, 10],
>>>     'prob': [.1, .9],
>>> }]
>>> cats = [
>>>     {'id': 0, 'name': 'background'},
>>>     {'id': 2, 'name': 'class1'}
>>> ]
>>> dets = Detections.from_coco_annots(anns, cats)

Example

>>> import kwimage
>>> # xdoctest: +REQUIRES(--module:ndsampler)
>>> import ndsampler
>>> sampler = ndsampler.CocoSampler.demo('photos')
>>> iminfo, anns = sampler.load_image_with_annots(1)
>>> shape = iminfo['imdata'].shape[0:2]
>>> kp_classes = sampler.dset.keypoint_categories()
>>> dets = kwimage.Detections.from_coco_annots(
>>>     anns, sampler.dset.dataset['categories'], sampler.catgraph,
>>>     kp_classes, shape=shape)

- to_coco(cname_to_cat=None, style='orig', image_id=None, dset=None)[source]¶

Converts this set of detections into coco-like annotation dictionaries.

Note

Not all aspects of the MS-COCO format can be accurately represented, so some liberties are taken. The MS-COCO standard specifies that annotations should have a category_id field, but in some cases this information is not available, so we populate a ‘category_name’ field if possible and, in the worst case, fall back to ‘category_index’.

Detections may also contain information beyond the MS-COCO standard (e.g. weight, prob, score); this information is added as foreign fields.
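The category fallback described in the note (category_id, then category_name, then category_index) can be sketched in plain Python. This is an illustration under stated assumptions, not kwimage's code: columns_to_coco, catname_to_id, and the extra field name 'score' are hypothetical choices made for the sketch.

```python
def columns_to_coco(boxes, class_idxs, classes=None, catname_to_id=None,
                    scores=None, image_id=None):
    """Hypothetical helper: yield coco-like dicts using a category fallback."""
    for i, (box, cidx) in enumerate(zip(boxes, class_idxs)):
        ann = {'bbox': list(box)}
        if image_id is not None:
            ann['image_id'] = image_id
        name = None
        if classes is not None and cidx is not None:
            name = classes[cidx]
        if name is not None and catname_to_id and name in catname_to_id:
            ann['category_id'] = catname_to_id[name]  # standard coco field
        elif name is not None:
            ann['category_name'] = name  # fallback when no id is known
        else:
            ann['category_index'] = cidx  # worst case: raw class index
        if scores is not None:
            ann['score'] = scores[i]  # extra field beyond the coco standard
        yield ann


anns = list(columns_to_coco(
    boxes=[(0, 0, 5, 5), (1, 1, 2, 2)], class_idxs=[0, 1],
    classes=['cat', 'dog'], catname_to_id={'cat': 7},
    scores=[0.9, 0.4], image_id=3))
print(anns[0])  # {'bbox': [0, 0, 5, 5], 'image_id': 3, 'category_id': 7, 'score': 0.9}
print(anns[1])  # {'bbox': [1, 1, 2, 2], 'image_id': 3, 'category_name': 'dog', 'score': 0.4}
```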

- Parameters

cname_to_cat – currently ignored.

style (str) – either ‘orig’ (for the original coco format) or ‘new’ (for the more general kwcoco-style coco format). Defaults to ‘orig’.

image_id (int) – if specified, populates the image_id field of each annotation.

dset (kwcoco.CocoDataset | None) – if specified, attempts to populate the category_id field to be compatible with this coco dataset.

- Yields

dict – coco-like annotation structures

Example

>>> # xdoctest: +REQUIRES(module:ndsampler)
>>> from kwimage.structs.detections import *
>>> self = Detections.demo()[0]
>>> cname_to_cat = None
>>> list(self.to_coco())

- property boxes¶

- property class_idxs¶

- property scores¶

typically only populated for predicted detections

- property probs¶

typically only populated for predicted detections

- property weights¶

typically only populated for groundtruth detections

- property classes¶

- warp(transform, input_dims=None, output_dims=None, inplace=False)[source]¶

Spatially warp the detections.

- Parameters

transform (kwimage.Affine | ndarray | Callable | Any) – something coercible to a transform, usually a kwimage.Affine object

input_dims (Tuple[int, int]) – shape of the expected input canvas

output_dims (Tuple[int, int]) – shape of the expected output canvas

inplace (bool) – if True, operate in place

- Returns

the warped detections object

- Return type

Detections

Example

>>> import skimage.transform
>>> transform = skimage.transform.AffineTransform(scale=(2, 3), translation=(4, 5))
>>> self = Detections.random(2)
>>> new = self.warp(transform)
>>> assert new.boxes == self.boxes.warp(transform)
>>> assert new != self
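One plausible way to warp an axis-aligned box is to transform its four corners and take their axis-aligned bounds. The pure-Python sketch below assumes that approach; it is not taken from kwimage's implementation. warp_xywh is a hypothetical name, the 3x3 matrix is written as nested lists, and the values match the scale=(2, 3), translation=(4, 5) transform from the example above.

```python
def warp_xywh(box, matrix):
    """Warp an [x, y, w, h] box by a 3x3 affine matrix given as nested lists."""
    x, y, w, h = box
    corners = [(x, y), (x + w, y), (x + w, y + h), (x, y + h)]
    warped = []
    for px, py in corners:
        # Apply the affine map: [x', y', 1]^T = M @ [x, y, 1]^T
        qx = matrix[0][0] * px + matrix[0][1] * py + matrix[0][2]
        qy = matrix[1][0] * px + matrix[1][1] * py + matrix[1][2]
        warped.append((qx, qy))
    xs = [p[0] for p in warped]
    ys = [p[1] for p in warped]
    # The axis-aligned bounds of the warped corners become the new box.
    return [min(xs), min(ys), max(xs) - min(xs), max(ys) - min(ys)]


# Same parameters as the skimage example: scale=(2, 3), translation=(4, 5)
M = [[2, 0, 4], [0, 3, 5], [0, 0, 1]]
print(warp_xywh([1, 1, 2, 2], M))  # [6, 8, 4, 6]
```

For transforms with rotation or shear, the bounds of the warped corners enclose more area than the warped box itself, which is the usual trade-off of keeping boxes axis-aligned.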

- scale(factor, output_dims=None, inplace=False)[source]¶

Spatially scale the detections.

Example

>>> import kwimage
>>> self = kwimage.Detections.random(2)
>>> new = self.scale(2.0)
>>> assert len(new) == len(self)

- translate(offset, output_dims=None, inplace=False)[source]¶

Spatially translate the detections.

Example

>>> self = Detections.random(2)
>>> new = self.translate(10)
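Scaling and translation are the two simplest special cases of warp. The sketch below shows what they do to a single [x, y, w, h] box in plain Python, assuming scaling is about the origin and that a scalar offset applies to both axes (as the translate(10) call above suggests). scale_xywh and translate_xywh are hypothetical helpers, not kwimage functions.

```python
def scale_xywh(box, factor):
    """Scale an [x, y, w, h] box about the origin by a scalar or an (sx, sy) pair."""
    sx, sy = (factor, factor) if isinstance(factor, (int, float)) else factor
    x, y, w, h = box
    # Scaling about the origin multiplies positions and sizes alike.
    return [x * sx, y * sy, w * sx, h * sy]


def translate_xywh(box, offset):
    """Shift an [x, y, w, h] box; a scalar offset is applied to both axes."""
    tx, ty = (offset, offset) if isinstance(offset, (int, float)) else offset
    x, y, w, h = box
    # Translation moves the top-left corner and leaves the size unchanged.
    return [x + tx, y + ty, w, h]


print(scale_xywh([1, 2, 3, 4], 2))       # [2, 4, 6, 8]
print(translate_xywh([1, 2, 3, 4], 10))  # [11, 12, 3, 4]
```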

- classmethod concatenate(dets)[source]¶

- Parameters

dets (Sequence[Detections]) – list of detections to concatenate

- Returns

stacked detections

- Return type

Detections

Example

>>> self = Detections.random(2)
>>> other = Detections.random(3)
>>> dets = [self, other]
>>> new = Detections.concatenate(dets)
>>> assert new.num_boxes() == 5

>>> self = Detections.random(2, segmentations=True)
>>> other = Detections.random(3, segmentations=True)
>>> dets = [self, other]
>>> new = Detections.concatenate(dets)
>>> assert new.num_boxes() == 5
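The random() example below prints dets.data as a dictionary of per-detection fields, which suggests a column-oriented layout. Under that assumption, concatenation amounts to joining each column across the inputs. concatenate_columns is a hypothetical helper, and raising on mismatched keys is a design choice of this sketch, not documented kwimage behavior.

```python
def concatenate_columns(parts):
    """Hypothetical helper: stack column-oriented detection dicts row-wise."""
    keys = list(parts[0])
    for part in parts[1:]:
        # All inputs must share the same fields, otherwise rows would not align.
        if set(part) != set(keys):
            raise ValueError('all inputs must have the same data keys')
    return {key: [row for part in parts for row in part[key]] for key in keys}


a = {'boxes': [(0, 0, 1, 1), (1, 1, 1, 1)], 'scores': [0.5, 0.6]}
b = {'boxes': [(2, 2, 1, 1), (3, 3, 1, 1), (4, 4, 1, 1)], 'scores': [0.7, 0.8, 0.9]}
merged = concatenate_columns([a, b])
print(len(merged['boxes']))  # 5
```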

- argsort(reverse=True)[source]¶

Returns the indices that sort the detections by score, in descending order by default (ascending if reverse=False).

- Returns

ndarray: sorted indices

torch.Tensor: sorted indices, if using the torch backend

- Return type

ndarray[Shape[‘*’], Integer]

- sort(reverse=True)[source]¶

Returns a copy of the detections sorted by score, in descending order by default (ascending if reverse=False).

- Returns

sorted copy of self

- Return type

Detections
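argsort and sort fit together: sorting is taking every column in argsort order. The pure-Python sketch below assumes column-oriented data with a 'scores' column; argsort_scores and sort_columns are hypothetical helpers that mirror the reverse=True (descending) default documented above.

```python
def argsort_scores(scores, reverse=True):
    """Indices that order ``scores``: descending by default, ascending if reverse=False."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=reverse)


def sort_columns(data, reverse=True):
    """Return a reordered copy of column-oriented detection data, by score."""
    order = argsort_scores(data['scores'], reverse=reverse)
    # Every column is permuted with the same order so rows stay aligned.
    return {key: [column[i] for i in order] for key, column in data.items()}


data = {'scores': [0.2, 0.9, 0.5], 'class_idxs': [0, 1, 2]}
print(argsort_scores(data['scores']))  # [1, 2, 0]
print(sort_columns(data))  # {'scores': [0.9, 0.5, 0.2], 'class_idxs': [1, 2, 0]}
```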

- compress(flags, axis=0)[source]¶

Returns a subset where corresponding locations are True.

- Parameters

flags (ndarray[Any, Bool] | torch.Tensor) – mask marking selected items

- Returns

subset of self

- Return type

Detections

CommandLine

xdoctest -m kwimage.structs.detections Detections.compress

Example

>>> # xdoctest: +REQUIRES(module:torch)
>>> import numpy as np
>>> import kwimage
>>> dets = kwimage.Detections.random(keypoints='dense')
>>> flags = np.random.rand(len(dets)) > 0.5
>>> subset = dets.compress(flags)
>>> assert len(subset) == flags.sum()
>>> subset = dets.tensor().compress(flags)
>>> assert len(subset) == flags.sum()

- take(indices, axis=0)[source]¶

Returns a subset specified by indices

- Parameters

indices (ndarray[Any, Integer]) – indices to select

- Returns

subset of self

- Return type

Detections

Example

>>> import kwimage
>>> dets = kwimage.Detections(boxes=kwimage.Boxes.random(10))
>>> subset = dets.take([2, 3, 5, 7])
>>> assert len(subset) == 4
>>> # xdoctest: +REQUIRES(module:torch)
>>> subset = dets.tensor().take([2, 3, 5, 7])
>>> assert len(subset) == 4
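compress and take are two views of the same selection: a boolean mask picks the same rows as the list of positions where it is True. The sketch below illustrates this on column-oriented data in plain Python; take_columns and compress_columns are hypothetical helpers, not kwimage's implementation.

```python
def take_columns(data, indices):
    """Hypothetical helper: select rows by integer index from every column."""
    return {key: [column[i] for i in indices] for key, column in data.items()}


def compress_columns(data, flags):
    """Hypothetical helper: keep rows whose flag is True."""
    # A boolean mask is equivalent to taking the positions where it is True.
    indices = [i for i, flag in enumerate(flags) if flag]
    return take_columns(data, indices)


data = {'scores': [0.1, 0.2, 0.3, 0.4], 'class_idxs': [0, 1, 0, 1]}
print(compress_columns(data, [True, False, True, False]))
# {'scores': [0.1, 0.3], 'class_idxs': [0, 0]}
```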

- property device¶

If the backend is torch, returns the data device; otherwise returns None.

- numpy()[source]¶

Converts tensors to numpy arrays, avoiding a memory copy when possible.

Example

>>> # xdoctest: +REQUIRES(module:torch)
>>> self = Detections.random(3).tensor()
>>> newself = self.numpy()
>>> self.scores[0] = 0
>>> assert newself.scores[0] == 0
>>> self.scores[0] = 1
>>> assert self.scores[0] == 1
>>> self.numpy().numpy()

- property dtype¶

- tensor(device=NoParam)[source]¶

Converts numpy arrays to tensors, avoiding a memory copy when possible.

Example

>>> # xdoctest: +REQUIRES(module:torch)
>>> from kwimage.structs.detections import *
>>> self = Detections.random(3)
>>> newself = self.tensor()
>>> self.scores[0] = 0
>>> assert newself.scores[0] == 0
>>> self.scores[0] = 1
>>> assert self.scores[0] == 1
>>> self.tensor().tensor()

- classmethod random(num=10, scale=1.0, classes=3, keypoints=False, segmentations=False, tensor=False, rng=None)[source]¶

Creates dummy data, suitable for use in tests and benchmarks

- Parameters

num (int) – number of boxes

scale (float | tuple) – bounding image size. Defaults to 1.0.

classes (int | Sequence) – list of class labels or number of classes

keypoints (bool) – if True include random keypoints for each box. Defaults to False.

segmentations (bool) – if True include random segmentations for each box. Defaults to False.

tensor (bool) – determines the backend. DEPRECATED: call .tensor() on the resulting object instead.

rng (np.random.RandomState) – random state
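To make the num, scale, and rng parameters concrete, here is a pure-Python sketch of drawing reproducible random boxes that stay inside a scale-sized canvas. It is not kwimage's sampling scheme: random_xywh_boxes is a hypothetical helper, and it uses the standard library's random.Random where kwimage documents np.random.RandomState.

```python
import random


def random_xywh_boxes(num=10, scale=1.0, rng=None):
    """Hypothetical helper: draw ``num`` [x, y, w, h] boxes inside a scale-sized canvas."""
    rng = rng if isinstance(rng, random.Random) else random.Random(rng)
    sx, sy = (scale, scale) if isinstance(scale, (int, float)) else scale
    boxes = []
    for _ in range(num):
        # Sort two samples per axis so the box lies inside the unit square,
        # then stretch the unit square to the requested canvas size.
        x1, x2 = sorted((rng.random(), rng.random()))
        y1, y2 = sorted((rng.random(), rng.random()))
        boxes.append([x1 * sx, y1 * sy, (x2 - x1) * sx, (y2 - y1) * sy])
    return boxes


print(random_xywh_boxes(num=2, scale=(640, 480), rng=0))
```

Passing the same integer seed yields the same boxes, which is what makes such dummy data useful in tests.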

Example

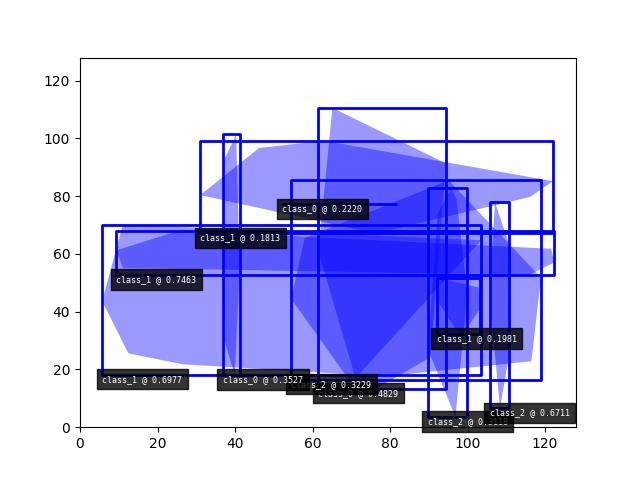

>>> import kwimage
>>> dets = kwimage.Detections.random(keypoints='jagged')
>>> dets.data['keypoints'].data[0].data
>>> dets.data['keypoints'].meta
>>> dets = kwimage.Detections.random(keypoints='dense')
>>> dets = kwimage.Detections.random(keypoints='dense', segmentations=True).scale(1000)
>>> # xdoctest:+REQUIRES(--show)
>>> import kwplot
>>> kwplot.autompl()
>>> dets.draw(setlim=True)

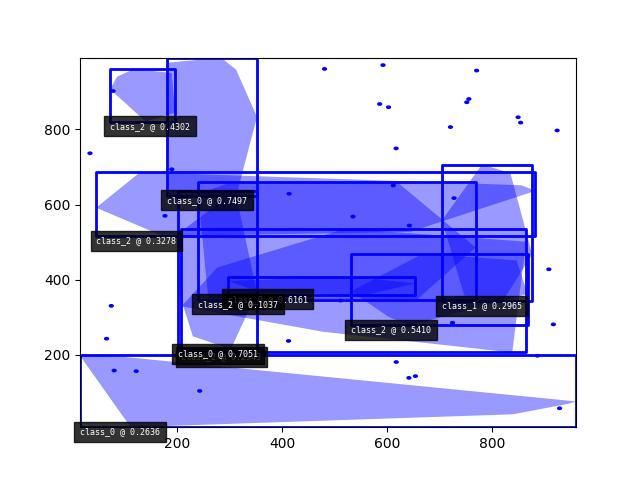

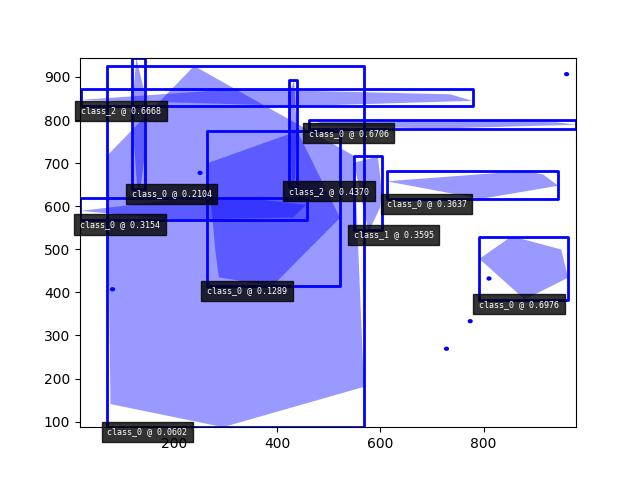

Example

>>> import kwimage
>>> import ubelt as ub
>>> dets = kwimage.Detections.random(
>>>     keypoints='jagged', segmentations=True, rng=0).scale(1000)
>>> print('dets = {}'.format(dets))
dets = <Detections(10)>
>>> dets.data['boxes'].quantize(inplace=True)
>>> print('dets.data = {}'.format(ub.repr2(
>>>     dets.data, nl=1, with_dtype=False, strvals=True)))
dets.data = {
    'boxes': <Boxes(xywh,
        array([[548, 544, 55, 172],
               [423, 645, 15, 247],
               [791, 383, 173, 146],
               [ 71, 87, 498, 839],
               [ 20, 832, 759, 39],
               [461, 780, 518, 20],
               [118, 639, 26, 306],
               [264, 414, 258, 361],
               [ 18, 568, 439, 50],
               [612, 616, 332, 66]], dtype=int32))>,
    'class_idxs': [1, 2, 0, 0, 2, 0, 0, 0, 0, 0],
    'keypoints': <PointsList(n=10)>,
    'scores': [0.3595079 , 0.43703195, 0.6976312 , 0.06022547, 0.66676672, 0.67063787,
               0.21038256, 0.1289263 , 0.31542835, 0.36371077],
    'segmentations': <SegmentationList(n=10)>,
}
>>> # xdoctest:+REQUIRES(--show)
>>> import kwplot
>>> kwplot.autompl()
>>> dets.draw(setlim=True)

Example

>>> # Boxes position/shape within 0-1 space should be uniform.
>>> # xdoctest: +REQUIRES(--show)
>>> import kwplot
>>> kwplot.autompl()
>>> fig = kwplot.figure(fnum=1, doclf=True)
>>> fig.gca().set_xlim(0, 128)
>>> fig.gca().set_ylim(0, 128)
>>> import kwimage
>>> kwimage.Detections.random(num=10, segmentations=True).scale(128).draw()

- class kwimage.Heatmap(data=None, meta=None, **kwargs)[source]¶

Bases:

Spatial, _HeatmapDrawMixin, _HeatmapWarpMixin, _HeatmapAlgoMixin

Keeps track of a downscaled heatmap and how to transform it to overlay the original input image. Heatmaps are generally used to estimate class probabilities at each pixel. This data structure additionally contains logic to augment each pixel with offset (dydx) and scale (diameter) information.

- Variables

data (Dict[str, ArrayLike]) –

dictionary containing spatially aligned heatmap data. Valid keys are as follows.

- class_probs (ArrayLike[C, H, W] | ArrayLike[C, D, H, W]):

A probability map for each class. C is the number of classes.

- offset (ArrayLike[2, H, W] | ArrayLike[3, D, H, W], optional):

object center position offset in y,x / t,y,x coordinates

- diameter (ArrayLike[2, H, W] | ArrayLike[3, D, H, W], optional):

object bounding box sizes in h,w / d,h,w coordinates

- keypoints (ArrayLike[2, K, H, W] | ArrayLike[3, K, D, H, W], optional):

y/x offsets for K different keypoint classes

meta (Dict[str, Any]) –

dictionary containing miscellaneous metadata about the heatmap data. Valid keys are as follows.

- img_dims (Tuple[H, W] | Tuple[D, H, W]):

original image dimensions

- tf_data_to_img (skimage.transform._geometric.GeometricTransform):

transformation matrix (typically similarity or affine) that projects the given heatmap onto the image dimensions such that the image and heatmap are spatially aligned.

- classes (List[str] | ndsampler.CategoryTree):

information about which index in data['class_probs'] corresponds to which semantic class.

dims (Tuple) – dimensions of the heatmap (see img_dims for the original image dimensions).

**kwargs – any key that is accepted by the data or meta dictionaries can be specified as a keyword argument to this class, and it will be placed in the appropriate internal dictionary.
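The tf_data_to_img entry ties heatmap coordinates to image coordinates. Assuming it is a pure scale-plus-translation transform, like the skimage.transform.AffineTransform(translation=(-18, -18), scale=(8, 8)) in the example that follows, the mapping reduces to x_img = sx * x_data + tx. The helpers data_to_img and img_to_data are hypothetical; a transform with rotation or shear would need the full matrix.

```python
def data_to_img(point, scale, translation):
    """Map a heatmap (data) coordinate to image coordinates: x_img = sx * x + tx."""
    (x, y), (sx, sy), (tx, ty) = point, scale, translation
    return (sx * x + tx, sy * y + ty)


def img_to_data(point, scale, translation):
    """Inverse mapping, from image coordinates back into the heatmap grid."""
    (x, y), (sx, sy), (tx, ty) = point, scale, translation
    return ((x - tx) / sx, (y - ty) / sy)


# Parameters from the Heatmap example: scale=(8, 8), translation=(-18, -18)
print(data_to_img((16, 16), (8, 8), (-18, -18)))  # (110, 110)
```

With those parameters, heatmap position (16, 16) lands at (110, 110), the midpoint of img_dims=(220, 220).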

CommandLine

xdoctest -m ~/code/kwimage/kwimage/structs/heatmap.py Heatmap --show

Example

>>> # xdoctest: +REQUIRES(module:torch)
>>> from kwimage.structs.heatmap import *  # NOQA
>>> import kwimage
>>> import skimage.transform
>>> class_probs = kwimage.grab_test_image(dsize=(32, 32), space='gray')[None, ..., 0] / 255.0
>>> img_dims = (220, 220)
>>> tf_data_to_img = skimage.transform.AffineTransform(translation=(-18, -18), scale=(8, 8))
>>> self = Heatmap(class_probs=class_probs, img_dims=img_dims,
>>>                tf_data_to_img=tf_data_to_img)
>>> aligned = self.upscale()
>>> # xdoctest: +REQUIRES(--show)
>>> import kwplot
>>> kwplot.autompl()
>>> kwplot.imshow(aligned[0])
>>> kwplot.show_if_requested()

Example

>>> # xdoctest: +REQUIRES(module:torch)
>>> import kwimage
>>> self = kwimage.Heatmap.random()
>>> # xdoctest: +REQUIRES(--show)
>>> import kwplot
>>> kwplot.autompl()
>>> self.draw()
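class_probs is documented as a probability map for each class, shaped [C, H, W]. If the classes are mutually exclusive (softmax-style output, which is an assumption rather than something stated here), the C values at each pixel should sum to one. The hypothetical helper below shows that invariant in plain nested lists; it is not part of kwimage.

```python
def normalize_class_probs(scores):
    """Hypothetical helper: turn non-negative scores [C][H][W] into per-pixel probabilities."""
    C, H, W = len(scores), len(scores[0]), len(scores[0][0])
    probs = [[[0.0] * W for _ in range(H)] for _ in range(C)]
    for r in range(H):
        for c in range(W):
            total = sum(scores[k][r][c] for k in range(C))
            for k in range(C):
                # Pixels with no evidence at all fall back to a uniform distribution.
                probs[k][r][c] = scores[k][r][c] / total if total > 0 else 1.0 / C
    return probs


# Two classes on a 1 x 2 grid: the first pixel favors class 1, the second is empty.
probs = normalize_class_probs([[[1, 0]], [[3, 0]]])
print(probs)  # [[[0.25, 0.5]], [[0.75, 0.5]]]
```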

- property shape¶

- property bounds¶

- property dims¶

space-time dimensions of this heatmap

- classmethod random(dims=(10, 10), classes=3, diameter=True, offset=True, keypoints=False, img_dims=None, dets=None, nblips=10, noise=0.0, smooth_k=3, rng=None, ensure_background=True)[source]¶

Creates dummy data, suitable for use in tests and benchmarks

- Parameters

dims (Tuple[int, int]) – dimensions of the heatmap

classes (int | List[str] | kwcoco.CategoryTree) – foreground classes

diameter (bool) – if True, include a “diameter” heatmap

offset (bool) – if True, include an “offset” heatmap

keypoints (bool) – if True, include a “keypoints” heatmap

smooth_k (int) – kernel size of the Gaussian blur used to smooth out the heatmaps.

img_dims (Tuple) – dimensions of the upscaled image that the heatmap corresponds to. (This should be removed and simply handled with a transform in the future.)

- Returns